Use the W&B OpenAI API integration to log requests, responses, token counts, and model metadata for all OpenAI models, including fine-tuned models.

See the OpenAI fine-tuning integration to learn how to use W&B to track your fine-tuning experiments, models, and datasets and share your results with your colleagues.

Install OpenAI Python API library

The W&B autolog integration works with OpenAI version 0.28.1 and below. To install OpenAI Python API version 0.28.1, run `pip install openai==0.28.1`.

Use the OpenAI Python API

1. Import autolog and initialise it

First, import autolog from wandb.integration.openai and initialise it.

You can optionally pass autolog a dictionary of the arguments that wandb.init() accepts. This includes a project name, team name, entity, and more. For more information about wandb.init(), see the API Reference Guide.
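The import and initialisation described in this step can be sketched as follows. This is a minimal sketch, assuming wandb and openai 0.28.1 or below are installed and that you are logged in to W&B. The helper names build_init_kwargs and enable_autolog and the project name used below are hypothetical and chosen for illustration only; autolog itself comes from wandb.integration.openai as described above.

```python
def build_init_kwargs(project, entity=None):
    """Collect the wandb.init() arguments to hand to autolog (hypothetical helper)."""
    kwargs = {"project": project}
    if entity is not None:
        # entity is the W&B user or team that owns the project
        kwargs["entity"] = entity
    return kwargs


def enable_autolog(init_kwargs):
    """Start autologging OpenAI calls to W&B (hypothetical helper).

    The import is kept inside the function so that this sketch can be
    loaded even where wandb is not installed.
    """
    from wandb.integration.openai import autolog

    # autolog takes a dictionary of wandb.init() arguments
    autolog(init_kwargs)
    return autolog
```

For example, calling enable_autolog(build_init_kwargs("openai-autolog-demo")) would start a run in a project with that (assumed) name and print the run link used in step 3.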

2. Call the OpenAI API

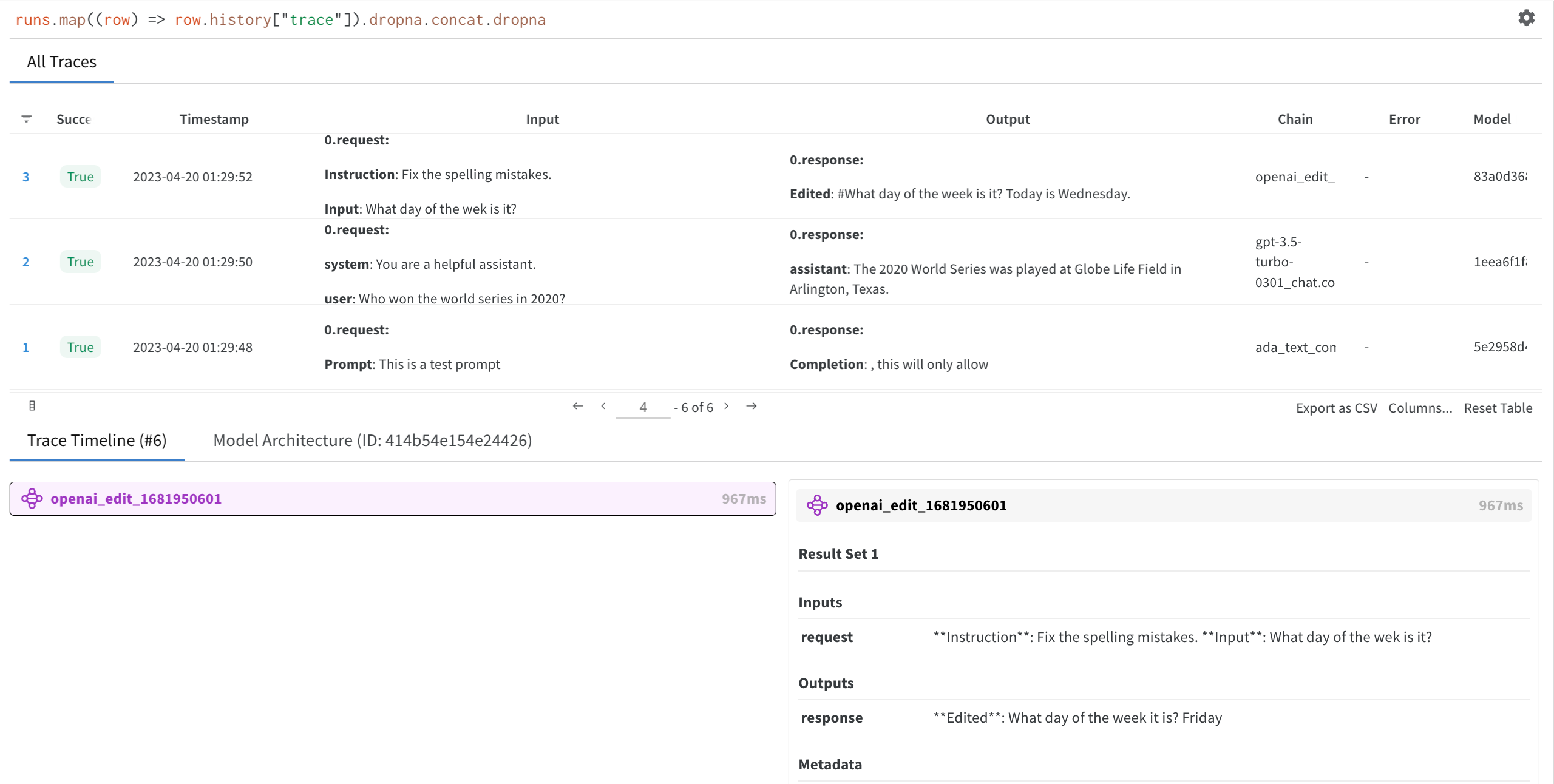
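A call to the OpenAI API in this step might look like the sketch below. It assumes openai 0.28.1 or below, an API key in the OPENAI_API_KEY environment variable, and that autolog has already been initialised in step 1. The helper names build_chat_request and ask, the model name, and the system prompt are illustrative assumptions, not part of the integration.

```python
import os


def build_chat_request(question, model="gpt-3.5-turbo"):
    """Build keyword arguments for a chat completion request (hypothetical helper)."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": question},
        ],
    }


def ask(question):
    """Send one chat request using the pre-1.0 OpenAI Python API."""
    import openai  # assumes openai<=0.28.1 is installed

    openai.api_key = os.environ["OPENAI_API_KEY"]
    # ChatCompletion.create is the openai<=0.28.1 interface
    response = openai.ChatCompletion.create(**build_chat_request(question))
    return response["choices"][0]["message"]["content"]
```

No W&B-specific code appears in the request itself: once autolog is active, the call is made exactly as it would be without the integration.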

Each call you make to the OpenAI API is now logged to W&B automatically.

3. View your OpenAI API inputs and responses

Click on the W&B run link generated by autolog in step 1. This redirects you to your project workspace in the W&B App.

Select a run you created to view the trace table, trace timeline and the model architecture of the OpenAI LLM used.

Turn off autolog

W&B recommends that you call disable() to close all W&B processes when you are finished using the OpenAI API.
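One way to make sure disable() is always called is to wrap your OpenAI calls in try/finally, as in the sketch below. The wrapper name call_then_disable is hypothetical, and the try/finally pattern is a suggestion of this sketch rather than something the integration requires; the only call it relies on is autolog.disable().

```python
def call_then_disable(autolog, work):
    """Run work(), then call autolog.disable() even if work() raises.

    autolog is the object imported from wandb.integration.openai;
    work is any zero-argument function that makes your OpenAI calls.
    """
    try:
        return work()
    finally:
        # Closes the W&B processes started by autolog
        autolog.disable()
```

If you do not need the wrapper, calling autolog.disable() directly after your last OpenAI request has the same effect.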