What this notebook covers

We show you how to integrate W&B with your PyTorch code to add experiment tracking to your pipeline.

Install, import, and log in

Step 0: Install W&B

To get started, we’ll need to get the library. wandb is easily installed using pip.

Step 1: Import W&B and Login

In order to log data to our web service, you’ll need to log in. If this is your first time using W&B, you’ll need to sign up for a free account at the link that appears.

Define the experiment and pipeline

Track metadata and hyperparameters with wandb.init()

Programmatically, the first thing we do is define our experiment: what are the hyperparameters? What metadata is associated with this run?

It’s a pretty common workflow to store this information in a config dictionary (or similar object) and then access it as needed.

For this example, we’re only letting a few hyperparameters vary and hand-coding the rest. But any part of your model can be part of the config.

We also include some metadata: we’re using the MNIST dataset and a convolutional architecture. If we later work with, say, fully connected architectures on CIFAR in the same project, this will help us separate our runs.
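A config dictionary along these lines would capture both kinds of information. This is a minimal sketch: the key names and numeric values are illustrative assumptions, and only the MNIST dataset and the convolutional architecture come from the text above.

```python
# Hypothetical hyperparameters and metadata for one run.
# All keys and numeric values are illustrative assumptions; only "MNIST"
# and the convolutional architecture are named in the text.
config = dict(
    epochs=5,
    classes=10,
    kernels=[16, 32],      # channel counts for two convolutional layers
    batch_size=128,
    learning_rate=0.005,
    dataset="MNIST",       # metadata: which dataset this run uses
    architecture="CNN",    # metadata: which model family this run uses
)
```

Keeping metadata such as dataset and architecture next to the hyperparameters is what later lets runs in the same project be filtered and grouped.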

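With the experiment defined, the pipeline itself has three steps:

- we first make a model, plus associated data and optimizer, then

- we train the model accordingly and finally

- test it to see how training went.

All of this happens inside the context of wandb.init().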
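The three steps can be sketched as a single function. This is an assumption-laden outline, not the notebook's exact code: make, train, and test are passed in by the caller with hypothetical signatures, "pytorch-demo" is a placeholder project name, and the init parameter exists only so the sketch can be exercised with a stand-in instead of a real W&B connection.

```python
def model_pipeline(hyperparameters, make, train, test, init=None):
    """Run make -> train -> test inside a single W&B run.

    `make`, `train`, and `test` are caller-supplied functions with
    hypothetical signatures. `init` defaults to wandb.init and is only
    a parameter so a stand-in can be substituted.
    """
    if init is None:
        import wandb  # imported lazily so a stand-in can replace it

        init = wandb.init

    # "pytorch-demo" is a placeholder project name.
    with init(project="pytorch-demo", config=hyperparameters) as run:
        # Read hyperparameters back from the run so the logged values
        # are the ones the model actually uses.
        config = run.config

        model, train_loader, test_loader, criterion, optimizer = make(config)
        train(run, model, train_loader, criterion, optimizer, config)
        test(run, model, test_loader)

    return model
```

Everything between make and test executes while the run opened by wandb.init() is active, and the run is closed when the with block exits.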

Calling this function sets up a line of communication between your code and our servers.

Passing the config dictionary to wandb.init() immediately logs all that information to us, so you’ll always know what hyperparameter values you set your experiment to use.

To ensure the values you chose and logged are always the ones that get used in your model, we recommend using the run.config copy of your object. Check the definition of make below to see some examples.

Side Note: We take care to run our code in separate processes, so that any issues on our end (such as if a giant sea monster attacks our data centers) don’t crash your code. Once the issue is resolved, such as when the Kraken returns to the deep, you can log the data with wandb sync.

Define the data loading and model

Now, we need to specify how the data is loaded and what the model looks like. This part is very important, but it’s no different from what it would be without wandb, so we won’t dwell on it.

Nothing about defining the model changes with wandb either, so we’re gonna stick with a standard ConvNet architecture. Don’t be afraid to mess around with this and try some experiments: all your results will be logged on wandb.ai.

Define training logic

Moving on in our model_pipeline, it’s time to specify how we train.

Two wandb functions come into play here: watch and log.

Track gradients with run.watch() and everything else with run.log()

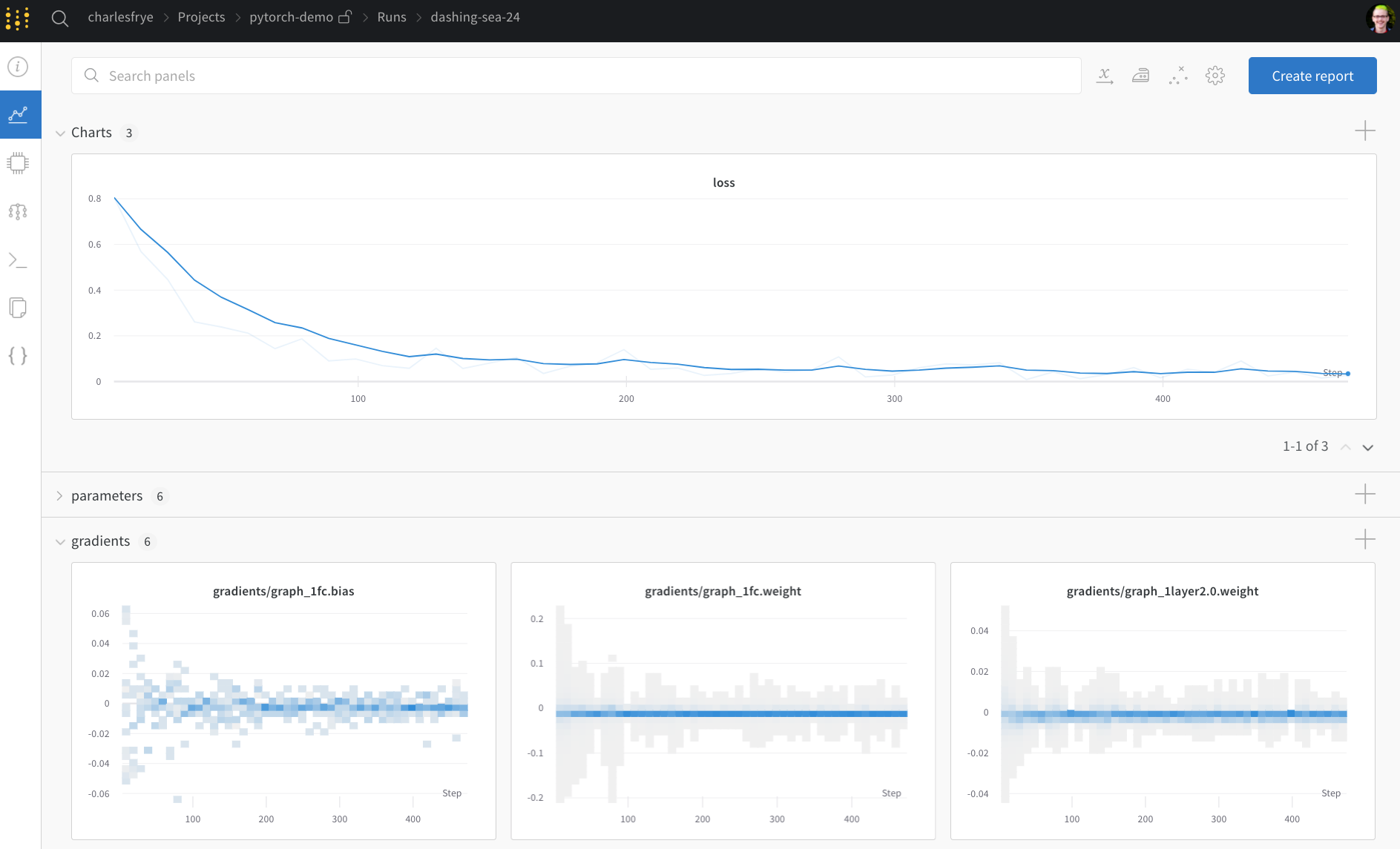

run.watch() will log the gradients and the parameters of your model, every log_freq steps of training. All you need to do is call it before you start training.

The rest of the training code remains the same: we iterate over epochs and batches, running forward and backward passes and applying our optimizer. The only difference is in the logging code, where metrics are passed to run.log().

run.log() expects a dictionary with strings as keys. These strings identify the objects being logged, which make up the values. You can also optionally log which step of training you’re on.

Side Note: I like to use the number of examples the model has seen, since this makes for easier comparison across batch sizes, but you can use raw steps or batch count. For longer training runs, it can also make sense to log by epoch.
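Put together, a training function along these lines calls run.watch() once and run.log() periodically. It is a sketch under stated assumptions: run is the object returned by wandb.init(), the model, loader, criterion, and optimizer are ordinary PyTorch objects, and the log_freq value, the log_every parameter, and the metric names are illustrative choices.

```python
def train(run, model, loader, criterion, optimizer, epochs, log_every=25):
    """Train `model` and report the loss to W&B.

    Assumes `run` comes from wandb.init() and the other arguments are
    ordinary PyTorch objects. `log_every` and the log_freq value below
    are illustrative choices.
    """
    # Ask W&B to record gradients and parameters every 10 steps.
    # This must be called before training starts.
    run.watch(model, criterion, log="all", log_freq=10)

    example_ct = 0  # number of examples seen so far
    batch_ct = 0    # number of batches processed so far
    for epoch in range(epochs):
        for images, labels in loader:
            # Standard forward pass, backward pass, and optimizer step.
            outputs = model(images)
            loss = criterion(outputs, labels)
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

            example_ct += len(images)
            batch_ct += 1

            # Report metrics every `log_every` batches, using the number
            # of examples seen as the step.
            if batch_ct % log_every == 0:
                run.log({"epoch": epoch, "loss": float(loss)}, step=example_ct)

    return example_ct
```

Using example_ct as the step keeps loss curves comparable when the batch size changes between runs.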

Define testing logic

Once the model is done training, we want to test it: run it against some fresh data from production, perhaps, or apply it to some hand-curated examples.

(Optional) Call run.save()

This is also a great time to save the model’s architecture and final parameters to disk. For maximum compatibility, we’ll export our model in the Open Neural Network eXchange (ONNX) format.

Passing that filename to run.save() ensures that the model parameters are saved to W&B’s servers: no more losing track of which .h5 or .pb corresponds to which training runs.

For more advanced wandb features for storing, versioning, and distributing models, check out our Artifacts tools.
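The testing and saving steps might look roughly like the function below. It is a sketch, not the notebook's exact code: the function name, the test_accuracy metric name, and the model.onnx default are illustrative, run is assumed to come from wandb.init(), and using the last test batch as the example input for torch.onnx.export is an assumption made to keep the example short.

```python
def evaluate_and_save(run, model, test_loader, onnx_path="model.onnx"):
    """Evaluate `model`, log its accuracy, and upload an ONNX export.

    Assumes `run` comes from wandb.init() and `model` is a PyTorch
    classifier. The metric name and file name are illustrative.
    """
    import torch  # imported here so the sketch has no import-time dependency

    model.eval()
    correct, total = 0, 0
    images = None
    with torch.no_grad():  # gradients are not needed for evaluation
        for images, labels in test_loader:
            outputs = model(images)
            predicted = outputs.argmax(dim=1)  # most likely class per example
            total += labels.size(0)
            correct += (predicted == labels).sum().item()

    accuracy = correct / total
    run.log({"test_accuracy": accuracy})

    # Export with the last batch as the example input, then hand the
    # file name to W&B so the file is uploaded with the run.
    torch.onnx.export(model, images, onnx_path)
    run.save(onnx_path)
    return accuracy
```

The function expects a non-empty test_loader, since accuracy is computed as correct / total.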

Run training and watch your metrics live on wandb.ai

Now that we’ve defined the whole pipeline and slipped in those few lines of W&B code, we’re ready to run our fully tracked experiment.

We’ll report a few links to you: our documentation, the Project page, which organizes all the runs in a project, and the Run page, where this run’s results will be stored.

Navigate to the Run page and check out these tabs:

- Charts, where the model gradients, parameter values, and loss are logged throughout training

- System, which contains a variety of system metrics, including Disk I/O utilization, CPU and GPU metrics (watch that temperature soar), and more

- Logs, which has a copy of anything pushed to standard out during training

- Files, where, once training is complete, you can click on the model.onnx to view our network with the Netron model viewer.

Once the with wandb.init() block exits, we’ll also print a summary of the results in the cell output.

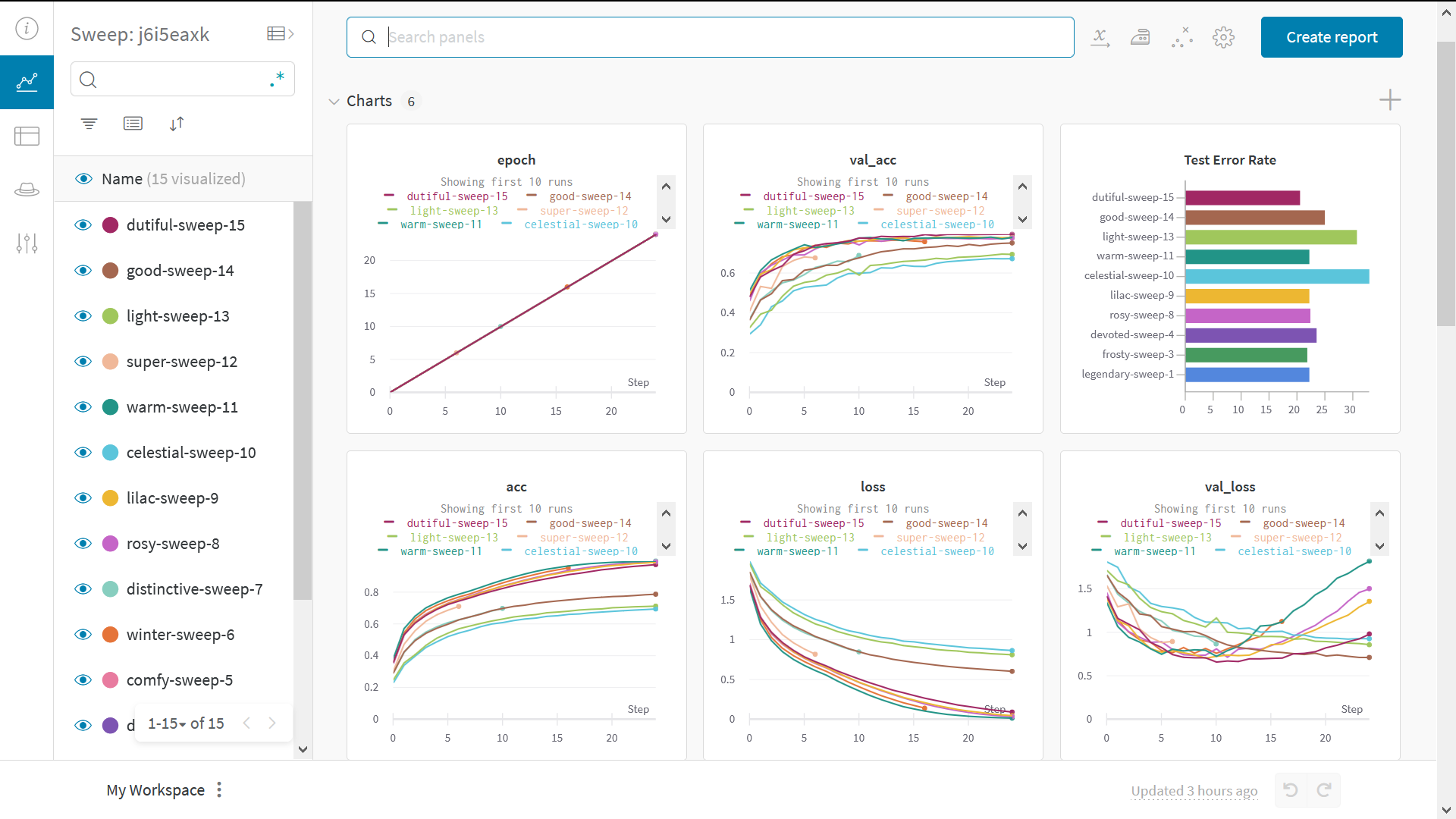

Test Hyperparameters with Sweeps

We only looked at a single set of hyperparameters in this example. But an important part of most ML workflows is iterating over a number of hyperparameters.

You can use W&B Sweeps to automate hyperparameter testing and explore the space of possible models and optimization strategies. Check out a Colab notebook demonstrating hyperparameter optimization using W&B Sweeps.

Running a hyperparameter sweep with W&B takes three steps:

- Define the sweep: We do this by creating a dictionary or a YAML file that specifies the parameters to search through, the search strategy, the optimization metric, and so on.

- Initialize the sweep: sweep_id = wandb.sweep(sweep_config)

- Run the sweep agent: wandb.agent(sweep_id, function=train)
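The three steps can be sketched as follows. The sweep definition is a hypothetical example (random search over two made-up parameter ranges, minimizing a metric logged as "loss"), the run_sweep helper and the "pytorch-sweeps-demo" project name are placeholders, and train stands for whatever function starts a run and trains with the values W&B hands it.

```python
# Step 1: define the sweep. Hypothetical example: random search over two
# hyperparameters, minimizing a metric logged under the name "loss".
sweep_config = {
    "method": "random",
    "metric": {"name": "loss", "goal": "minimize"},
    "parameters": {
        "learning_rate": {"min": 0.0001, "max": 0.1},
        "batch_size": {"values": [32, 64, 128]},
    },
}


def run_sweep(train, count=5):
    """Register the sweep and run `count` trials of `train` in this process."""
    import wandb  # imported lazily; requires wandb to be installed

    # Step 2: initialize the sweep. The project name is a placeholder.
    sweep_id = wandb.sweep(sweep_config, project="pytorch-sweeps-demo")

    # Step 3: run the agent, which calls `train` once per trial.
    wandb.agent(sweep_id, function=train, count=count)
    return sweep_id
```

For the agent to have any effect, train needs to call wandb.init() itself and read its hyperparameters from the resulting run's config rather than from hard-coded values.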

Example gallery

Explore examples of projects tracked and visualized with W&B in our Gallery.

Advanced setup

- Environment variables: Set API keys in environment variables so you can run training on a managed cluster.

- Offline mode: Use dryrun mode to train offline and sync results later.

- On-prem: Install W&B in a private cloud or air-gapped servers in your own infrastructure. We have local installations for everyone from academics to enterprise teams.

- Sweeps: Set up hyperparameter search quickly with our lightweight tool for tuning.
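For the environment-variable and offline-mode items above, a small helper like the one below is one way to configure a job before wandb.init() is called. The helper itself is hypothetical. WANDB_API_KEY and WANDB_MODE are environment variables that wandb reads, and this sketch assumes the value offline for WANDB_MODE as the mode that trains offline for a later wandb sync.

```python
import os


def configure_wandb_env(api_key=None, offline=False, env=os.environ):
    """Set W&B-related environment variables before wandb.init() is called.

    WANDB_API_KEY and WANDB_MODE are read by wandb; this helper and its
    parameters are hypothetical.
    """
    if api_key is not None:
        # Lets jobs on a managed cluster authenticate without a prompt.
        env["WANDB_API_KEY"] = api_key
    if offline:
        # Write run data locally; upload it later with `wandb sync`.
        env["WANDB_MODE"] = "offline"
    return env
```

On a real cluster the key would normally come from the scheduler's secret store rather than being written in code.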