The `wandb` library includes a special callback for LightGBM. You can also use the generic logging features of W&B to track large experiments, such as hyperparameter sweeps.
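As a rough illustration of the callback, the sketch below trains a small LightGBM regressor and logs it to W&B. It assumes the `wandb_callback` and `log_summary` helpers from `wandb.integration.lightgbm`. The project name, the parameter values, the synthetic data, and the `train_with_wandb` function are all hypothetical placeholders, not part of the integration.

```python
# Hypothetical parameter values, chosen only for illustration.
PARAMS = {
    "objective": "regression",
    "metric": "l2",
    "learning_rate": 0.1,
    "max_depth": 6,
    "verbose": -1,
}


def train_with_wandb(project="lightgbm-demo"):
    """Train a small LightGBM regressor and log the run to W&B."""
    # Imported inside the function so this module loads even when the
    # packages are not installed; calling the function requires them.
    import lightgbm as lgb
    import numpy as np
    import wandb
    from wandb.integration.lightgbm import log_summary, wandb_callback

    # Synthetic data standing in for a real dataset (assumption).
    rng = np.random.default_rng(0)
    X = rng.normal(size=(500, 10))
    y = 2.0 * X[:, 0] + rng.normal(scale=0.1, size=500)
    train_set = lgb.Dataset(X[:400], label=y[:400])
    valid_set = lgb.Dataset(X[400:], label=y[400:], reference=train_set)

    run = wandb.init(project=project, config=PARAMS)
    gbm = lgb.train(
        PARAMS,
        train_set,
        num_boost_round=50,
        valid_sets=[valid_set],
        valid_names=["validation"],
        # The callback logs evaluation metrics during training.
        callbacks=[wandb_callback()],
    )
    # Log a feature importance plot and upload the model checkpoint.
    log_summary(gbm, save_model_checkpoint=True)
    run.finish()
    return gbm
```

Calling `train_with_wandb()` requires `lightgbm`, `numpy`, and `wandb` to be installed and a W&B login (or `WANDB_MODE=offline`) to be configured.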

Looking for working code examples? Check out our repository of examples on GitHub.

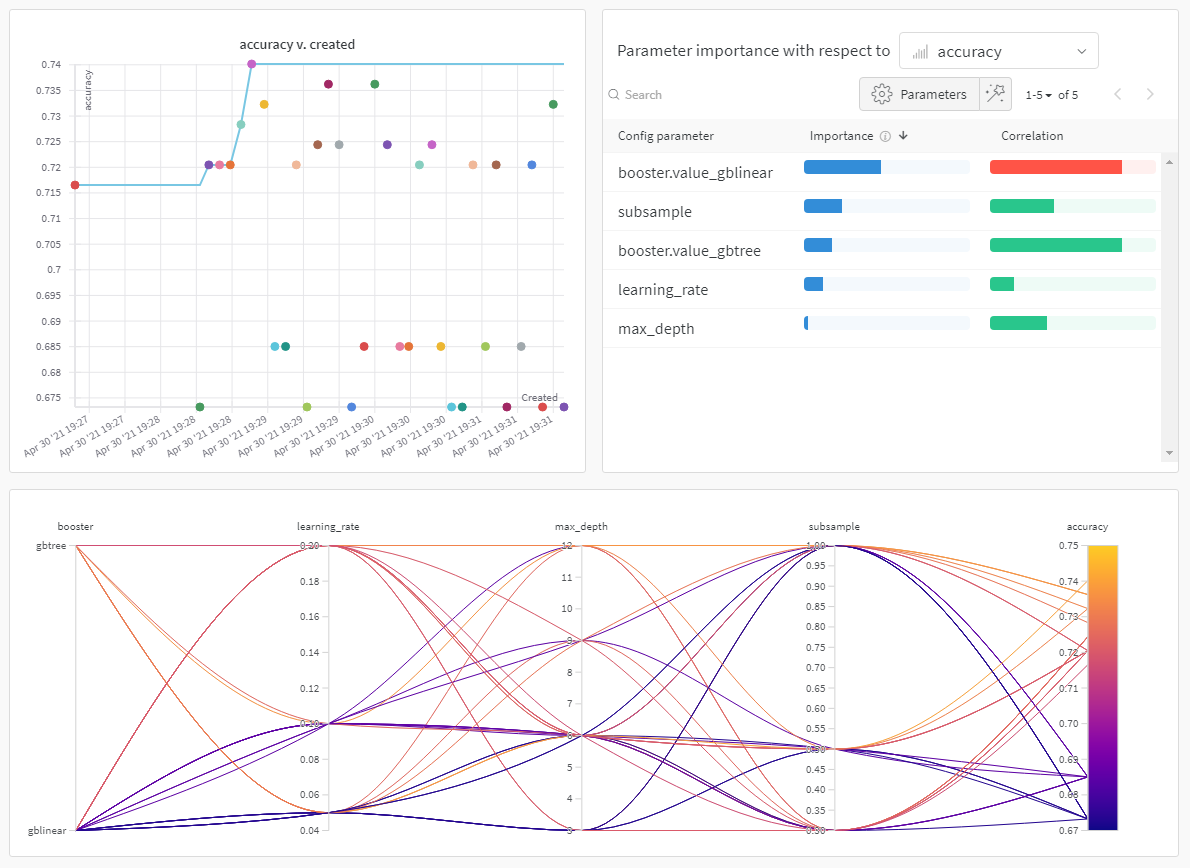

Tuning your hyperparameters with Sweeps

Getting the best performance out of a model requires tuning hyperparameters such as tree depth and learning rate. W&B Sweeps is a toolkit for configuring, orchestrating, and analyzing large hyperparameter search experiments. To learn more about these tools and see an example of how to use Sweeps with XGBoost, check out this interactive Colab notebook.
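A sweep over tree depth and learning rate for LightGBM might be set up along the following lines. This is a minimal sketch, not the notebook's code: the search ranges, the metric name `val_rmse`, the project name, the synthetic data, and the `train` and `launch_sweep` functions are all assumptions made for illustration.

```python
# Sweep definition: random search over the two hyperparameters named above.
# The ranges and the metric name are hypothetical.
sweep_config = {
    "method": "random",
    "metric": {"name": "val_rmse", "goal": "minimize"},
    "parameters": {
        "max_depth": {"values": [3, 5, 7, 9]},
        "learning_rate": {"min": 0.01, "max": 0.3},
    },
}


def train():
    """One sweep trial: read hyperparameters from the run config and train."""
    # Imported lazily so the config above can be inspected without
    # the packages installed; running a trial requires them.
    import lightgbm as lgb
    import numpy as np
    import wandb

    run = wandb.init()
    cfg = run.config  # filled in by the sweep agent for this trial

    # Synthetic data standing in for a real dataset (assumption).
    rng = np.random.default_rng(0)
    X = rng.normal(size=(500, 10))
    y = 2.0 * X[:, 0] + rng.normal(scale=0.1, size=500)
    train_set = lgb.Dataset(X[:400], label=y[:400])

    params = {
        "objective": "regression",
        "max_depth": cfg.max_depth,
        "learning_rate": cfg.learning_rate,
        "verbose": -1,
    }
    gbm = lgb.train(params, train_set, num_boost_round=50)

    # Log the metric the sweep optimizes, computed on held-out rows.
    pred = gbm.predict(X[400:])
    rmse = float(np.sqrt(np.mean((pred - y[400:]) ** 2)))
    wandb.log({"val_rmse": rmse})
    run.finish()


def launch_sweep(project="lightgbm-sweep-demo", count=5):
    """Register the sweep and run `count` trials in this process."""
    import wandb

    sweep_id = wandb.sweep(sweep=sweep_config, project=project)
    wandb.agent(sweep_id, function=train, count=count)
    return sweep_id
```

Calling `launch_sweep()` registers the sweep and runs five trials locally. The metric name in `sweep_config` has to match the key passed to `wandb.log`, which is why the sketch logs `val_rmse` explicitly rather than relying on callback-generated names.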