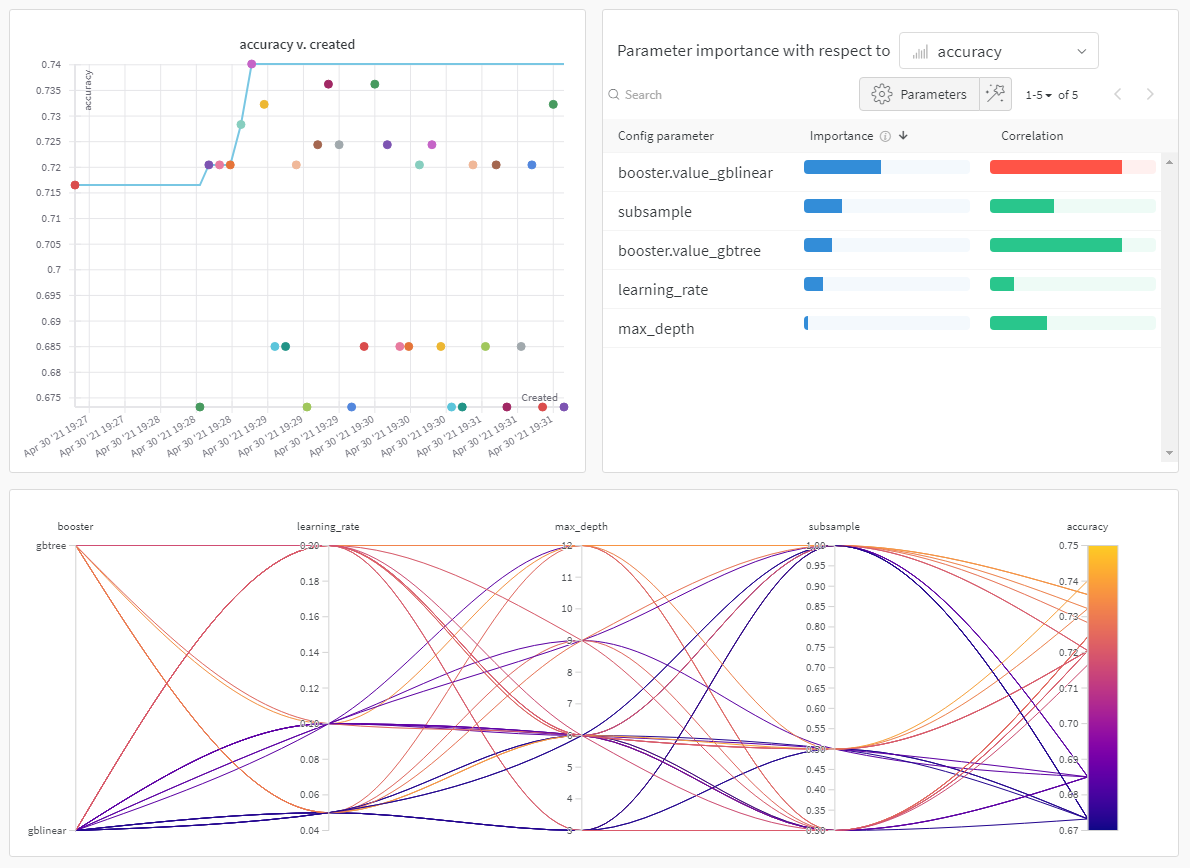

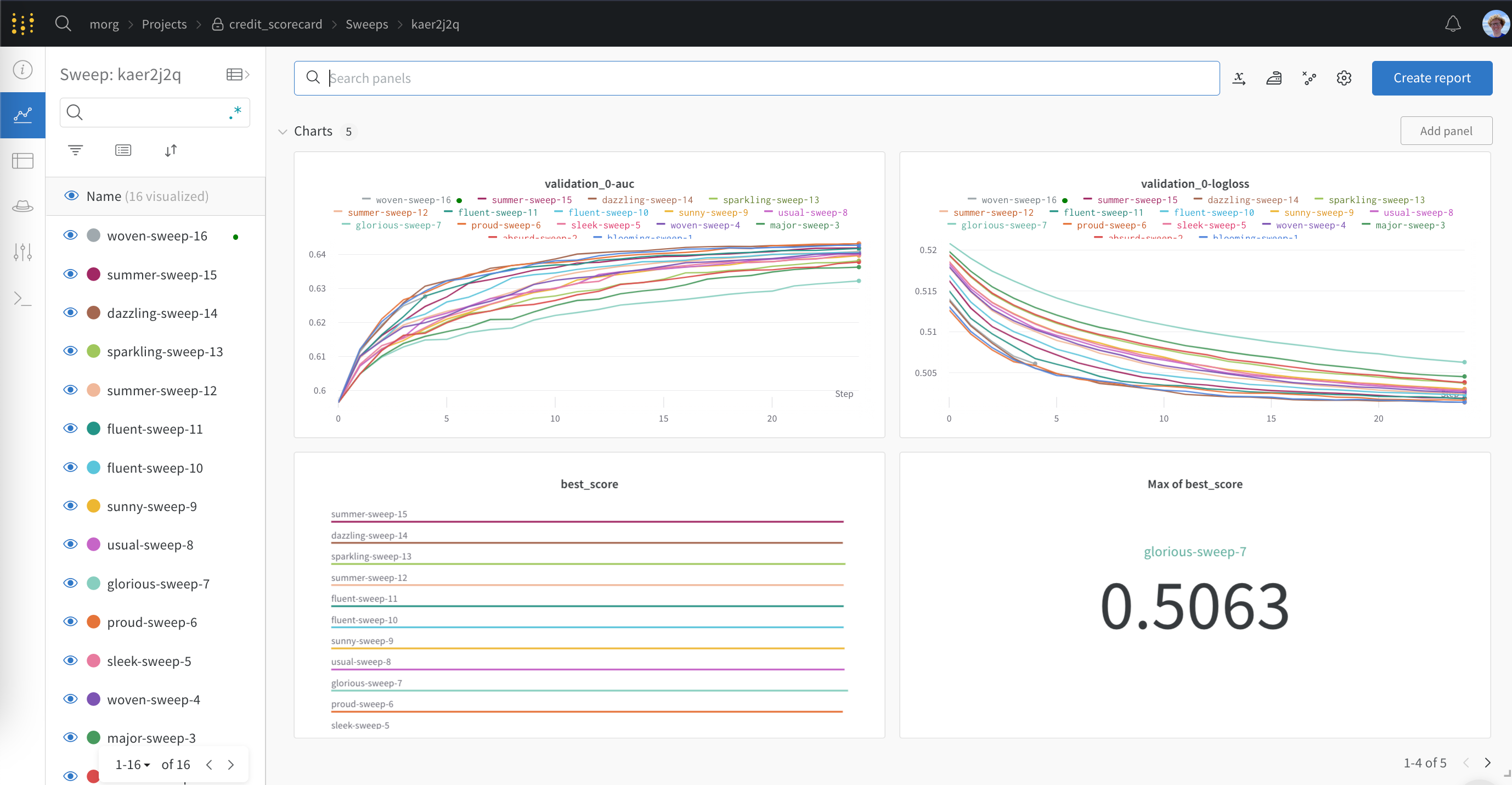

The wandb library provides a WandbCallback callback for logging metrics, configs, and saved boosters from XGBoost training. You can see output from the XGBoost WandbCallback in a live W&B Dashboard.

Get started

To log XGBoost metrics, configs, and booster models to W&B, pass the WandbCallback to XGBoost.
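A minimal sketch of passing the callback, assuming the import path `wandb.integration.xgboost` and the native `xgb.train` API. The synthetic data, the parameter values, the helper names `make_params` and `train`, and the project name `xgboost-demo` are illustrative assumptions, not part of the integration.

```python
def make_params():
    """Booster parameters; the values are illustrative, not recommendations."""
    return {
        "objective": "reg:squarederror",
        "eval_metric": "rmse",
        "max_depth": 3,
        "eta": 0.1,
    }


def train(num_boost_round=50):
    """Train a small booster and log it to W&B through WandbCallback."""
    # Third-party imports are kept local so make_params() works without them.
    import numpy as np
    import wandb
    import xgboost as xgb
    from wandb.integration.xgboost import WandbCallback

    # Synthetic regression data, purely for illustration.
    rng = np.random.default_rng(0)
    X = rng.normal(size=(200, 5))
    y = X @ rng.normal(size=5) + rng.normal(scale=0.1, size=200)
    dtrain = xgb.DMatrix(X[:160], label=y[:160])
    dvalid = xgb.DMatrix(X[160:], label=y[160:])

    params = make_params()
    # Start the run first: the callback logs to the active run.
    # "xgboost-demo" is a hypothetical project name.
    with wandb.init(project="xgboost-demo", config=params):
        booster = xgb.train(
            params,
            dtrain,
            num_boost_round=num_boost_round,
            evals=[(dtrain, "train"), (dvalid, "validation")],
            callbacks=[WandbCallback()],
        )
    return booster
```

Calling `train()` requires a W&B login (or offline mode) and starts one run. The names given in `evals` (`train`, `validation`) determine how the logged metrics are labeled.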

WandbCallback reference

Functionality

Passing WandbCallback to an XGBoost model will:

- log the booster model configuration to W&B

- log evaluation metrics collected by XGBoost, such as rmse, accuracy, etc., to W&B

- log training metrics collected by XGBoost (if you provide data to eval_set)

- log the best score and the best iteration

- save and upload your trained model to W&B Artifacts (when log_model = True)

- log feature importance plot when log_feature_importance=True (default)

- capture the best eval metric in wandb.Run.summary when define_metric=True (default)

Arguments

- log_model: (boolean) if True, save and upload the model to W&B Artifacts

- log_feature_importance: (boolean) if True, log a feature importance bar plot

- importance_type: (str) one of {weight, gain, cover, total_gain, total_cover} for tree model; weight for linear model

- define_metric: (boolean) if True (default), capture model performance at the best step, instead of the last step, of training in your run.summary
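The arguments above can be set explicitly when constructing the callback. The sketch below is one way to do that; `callback_kwargs` and `make_callback` are hypothetical helpers introduced here for illustration, and the import path `wandb.integration.xgboost` is assumed.

```python
# Importance types listed above for tree models.
TREE_IMPORTANCE_TYPES = {"weight", "gain", "cover", "total_gain", "total_cover"}


def callback_kwargs(log_model=True, importance_type="gain"):
    """Build keyword arguments for WandbCallback (hypothetical helper)."""
    if importance_type not in TREE_IMPORTANCE_TYPES:
        raise ValueError(f"unsupported importance_type: {importance_type!r}")
    return {
        "log_model": log_model,            # upload the booster to W&B Artifacts
        "log_feature_importance": True,    # log a feature importance bar plot
        "importance_type": importance_type,
        "define_metric": True,             # summary keeps the best step, not the last
    }


def make_callback(**overrides):
    """Create the callback; call this only after wandb.init() has started a run."""
    # Imported locally so callback_kwargs() works without wandb installed.
    from wandb.integration.xgboost import WandbCallback

    return WandbCallback(**callback_kwargs(**overrides))
```

For example, `make_callback(importance_type="total_gain")` would rank features by total gain in the logged plot while leaving the other settings unchanged.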

Tune your hyperparameters with Sweeps

Getting the best performance out of a model requires tuning hyperparameters such as tree depth and learning rate. W&B Sweeps is a powerful toolkit for configuring, orchestrating, and analyzing large hyperparameter testing experiments. You can also try this XGBoost & Sweeps Python script.
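As a rough sketch of how a sweep over tree depth and learning rate might look, the example below defines a random-search configuration and a training function that reads its hyperparameters from the run config. The search ranges, the synthetic data, the project name `xgboost-sweeps-demo`, and the metric name `validation-rmse` (which assumes the callback labels metrics as `<eval name>-<metric>`) are assumptions for illustration.

```python
SWEEP_CONFIG = {
    "method": "random",
    # Assumed metric name: eval set "validation" combined with metric "rmse".
    "metric": {"name": "validation-rmse", "goal": "minimize"},
    "parameters": {
        "max_depth": {"values": [3, 5, 7]},
        "learning_rate": {"distribution": "uniform", "min": 0.01, "max": 0.3},
    },
}


def train():
    """One sweep trial: read hyperparameters from the run config and train."""
    # Third-party imports are kept local so SWEEP_CONFIG can be inspected alone.
    import numpy as np
    import wandb
    import xgboost as xgb
    from wandb.integration.xgboost import WandbCallback

    # Synthetic regression data, purely for illustration.
    rng = np.random.default_rng(0)
    X = rng.normal(size=(200, 5))
    y = X @ rng.normal(size=5) + rng.normal(scale=0.1, size=200)
    dtrain = xgb.DMatrix(X[:160], label=y[:160])
    dvalid = xgb.DMatrix(X[160:], label=y[160:])

    with wandb.init() as run:
        params = {
            "objective": "reg:squarederror",
            "eval_metric": "rmse",
            "max_depth": run.config["max_depth"],
            "eta": run.config["learning_rate"],
        }
        xgb.train(
            params,
            dtrain,
            num_boost_round=50,
            evals=[(dvalid, "validation")],
            callbacks=[WandbCallback()],
        )


def launch(count=10):
    """Register the sweep and run `count` trials in this process."""
    import wandb

    # "xgboost-sweeps-demo" is a hypothetical project name.
    sweep_id = wandb.sweep(SWEEP_CONFIG, project="xgboost-sweeps-demo")
    wandb.agent(sweep_id, function=train, count=count)
```

Calling `launch()` requires a W&B login. Random search is used here only because it is simple to configure; the same training function would work with a grid or Bayesian search by changing `method`.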