- Track and visualize all ML experiments.

- Optimize and fine-tune models at scale with hyperparameter sweeps.

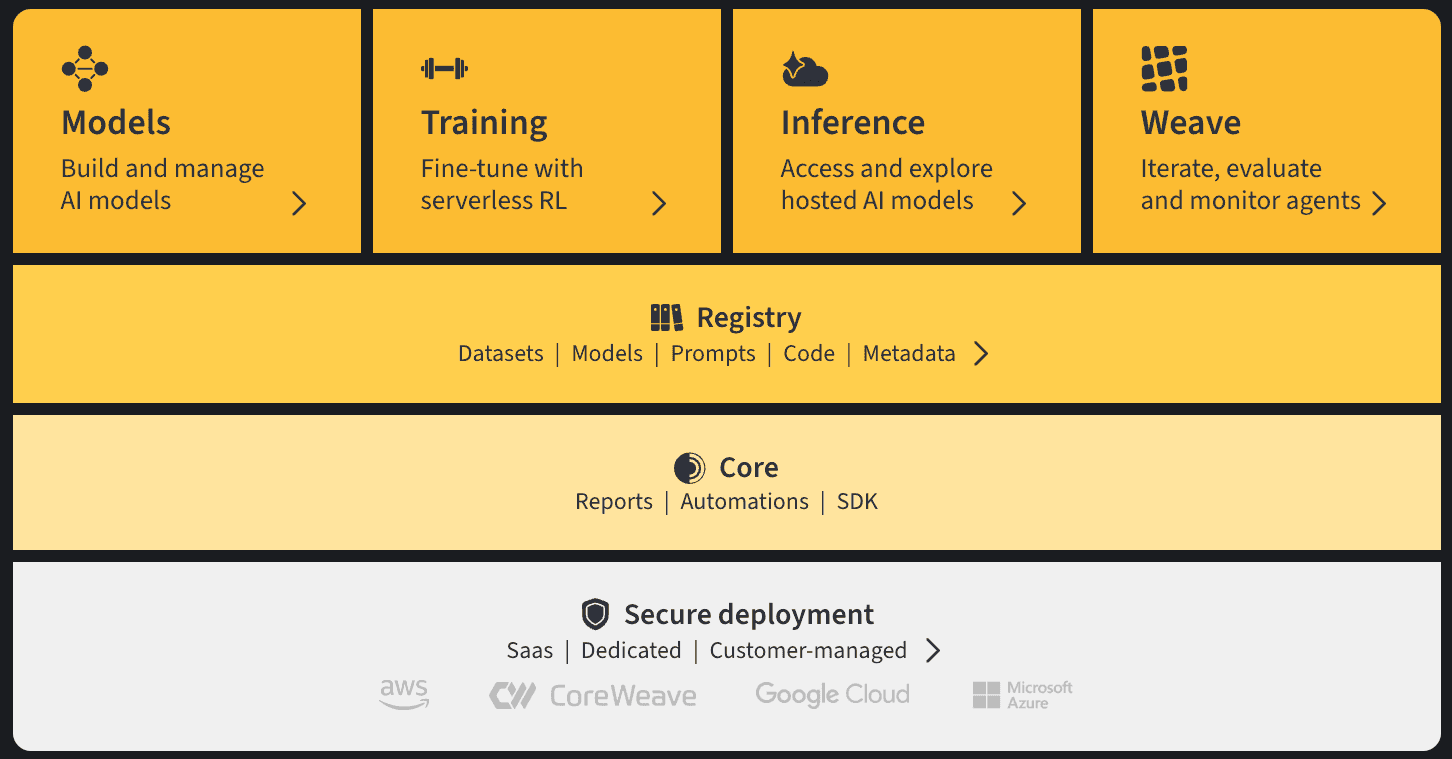

- Maintain a centralized hub of all models, with a seamless handoff point to devops and deployment

- Configure custom automations that trigger key workflows for model CI/CD.