The following sections highlight some of the ways you can use tables:

View your data

Log metrics and rich media during model training or evaluation, then visualize results in a persistent database synced to the cloud, or to your hosting instance.

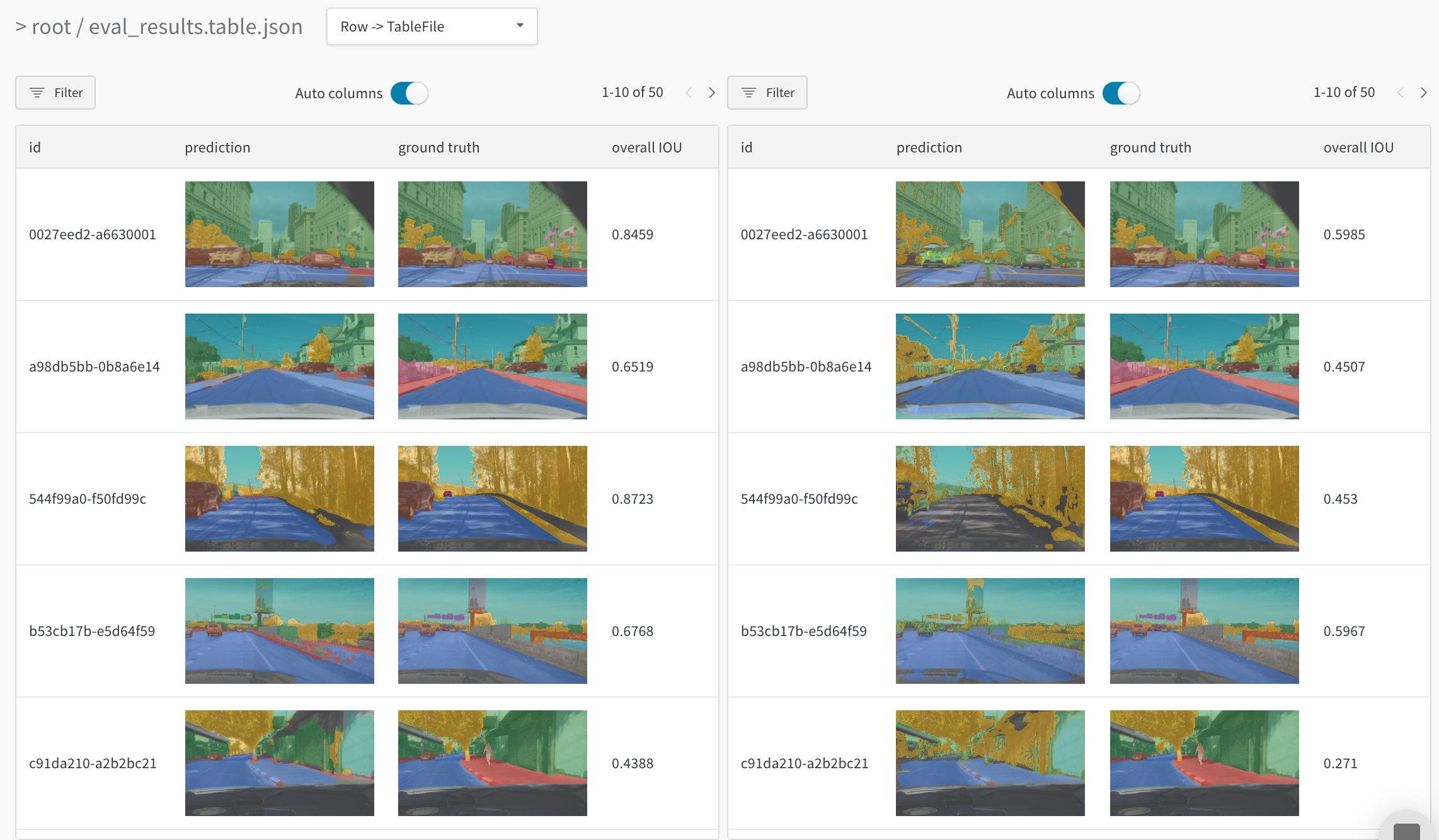
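As a minimal sketch of the logging step described above, the following Python snippet builds a small table and logs it with `wandb.Table`. The project name `tables-demo`, the table key `eval_predictions`, the column names, and the sample rows are all hypothetical. The sketch uses `mode="offline"` so it can run without a W&B account; remove that argument to sync to the cloud or to your hosting instance.

```python
# Hypothetical column names for a small evaluation table.
COLUMNS = ["id", "label", "prediction", "confidence"]


def build_rows():
    """Return made-up evaluation rows, one list per table row."""
    return [
        ["img_000", "cat", "cat", 0.97],
        ["img_001", "dog", "cat", 0.55],
        ["img_002", "bird", "bird", 0.88],
    ]


def log_table(rows, project="tables-demo"):
    """Log the rows as a W&B Table (requires the `wandb` package)."""
    import wandb  # imported lazily so build_rows() works without wandb

    # Assumption: offline mode, so nothing is uploaded until you sync.
    run = wandb.init(project=project, mode="offline")
    table = wandb.Table(columns=COLUMNS, data=rows)
    run.log({"eval_predictions": table})
    run.finish()
```

To log the table, call `log_table(build_rows())`. Once the run is synced, the table should appear in the run's workspace under the key it was logged with.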

Interactively explore your data

View, sort, filter, group, join, and query tables to understand your data and model performance—no need to browse static files or rerun analysis scripts.

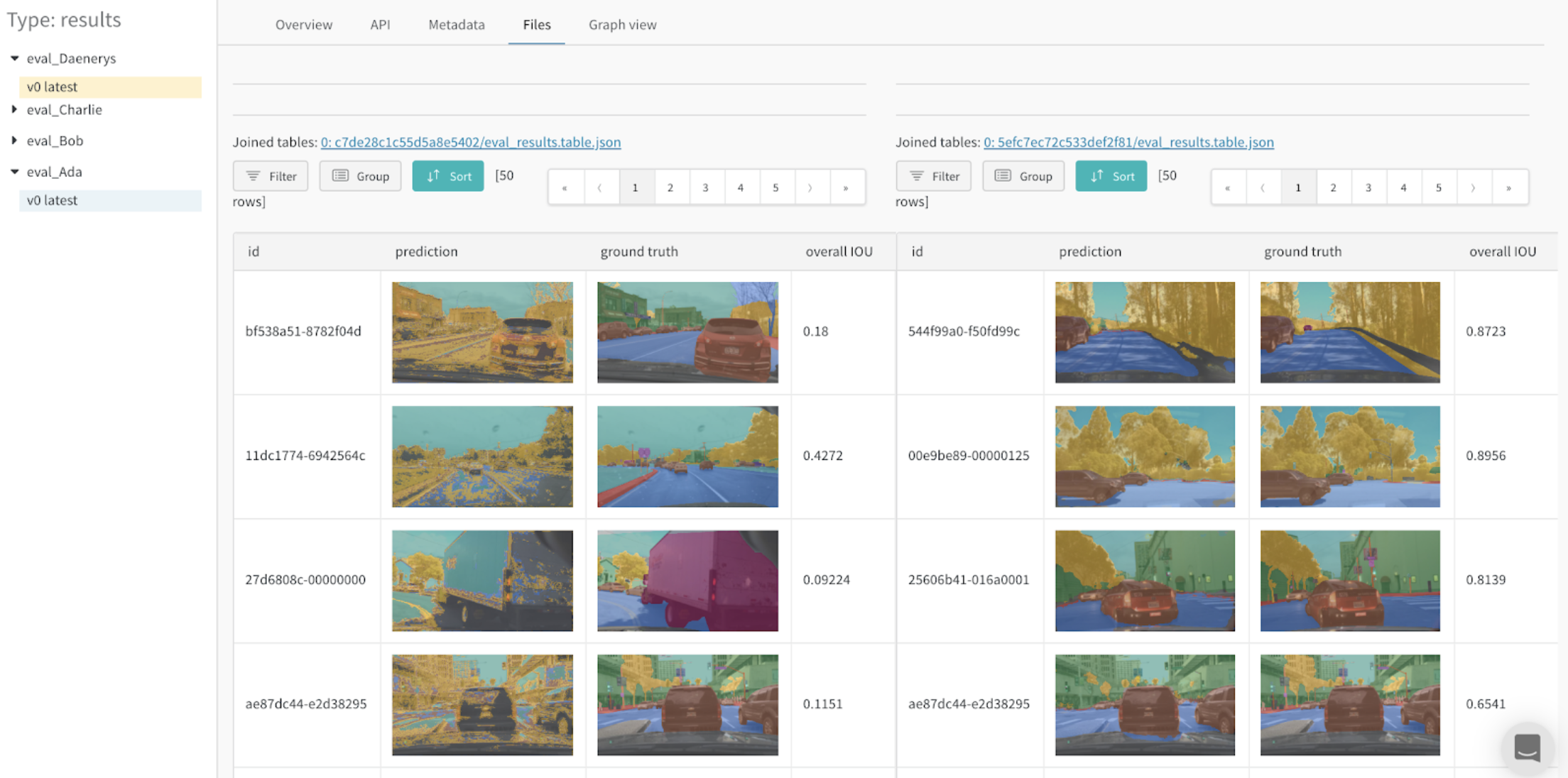

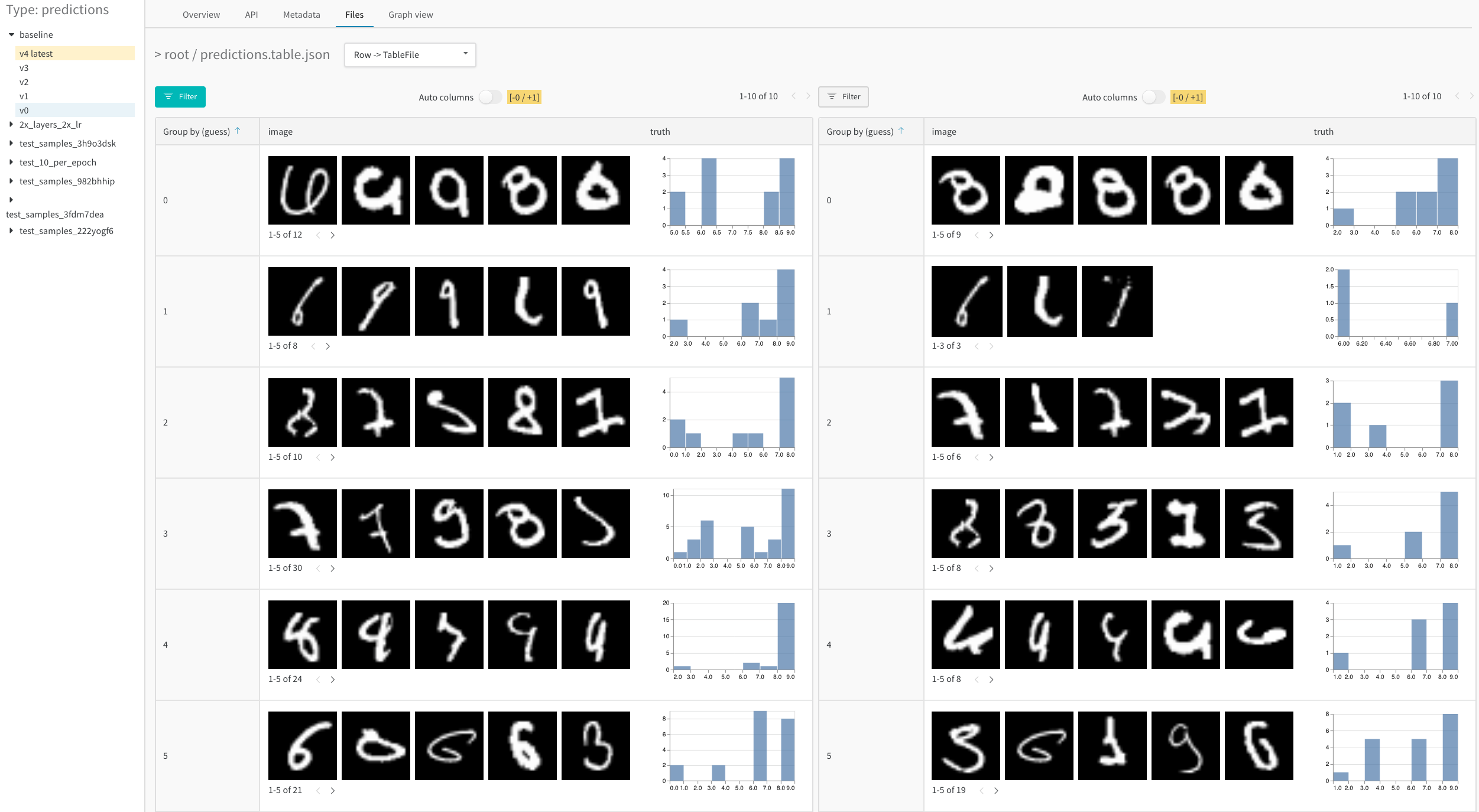

Compare model versions

Quickly compare results across different training epochs, datasets, hyperparameter choices, model architectures, and more.

Track every detail and see the bigger picture

Zoom in to visualize a specific prediction at a specific step. Zoom out to see the aggregate statistics, identify patterns of errors, and understand opportunities for improvement. This tool works for comparing steps within a single training run, or results across different model versions.
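One way to support both views is to log one row per prediction per step, so that individual rows can be inspected and the same rows can be grouped by step. The sketch below is illustrative only: the prediction data, column names, and project name are hypothetical. The `accuracy_by_step` helper mirrors in plain Python the kind of aggregate you might compute by grouping the table on its `step` column.

```python
from collections import defaultdict

# Hypothetical predictions: (step, example id, label, prediction).
PREDICTIONS = [
    (0, "ex_0", "cat", "dog"),
    (0, "ex_1", "dog", "dog"),
    (1, "ex_0", "cat", "cat"),
    (1, "ex_1", "dog", "dog"),
]


def accuracy_by_step(predictions):
    """Zoomed-out view: fraction of correct predictions at each step."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for step, _example_id, label, prediction in predictions:
        total[step] += 1
        correct[step] += int(label == prediction)
    return {step: correct[step] / total[step] for step in sorted(total)}


def log_predictions(predictions, project="tables-demo"):
    """Zoomed-in view: one table row per prediction (requires `wandb`)."""
    import wandb  # imported lazily so accuracy_by_step() works without wandb

    run = wandb.init(project=project, mode="offline")  # assumption: offline
    table = wandb.Table(columns=["step", "id", "label", "prediction", "correct"])
    for step, example_id, label, prediction in predictions:
        table.add_data(step, example_id, label, prediction, label == prediction)
    run.log({"predictions_by_step": table})
    run.finish()
```

With the sample data, `accuracy_by_step(PREDICTIONS)` returns `{0: 0.5, 1: 1.0}`, and `log_predictions(PREDICTIONS)` logs the per-row detail behind those numbers.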

Example Projects with W&B Tables

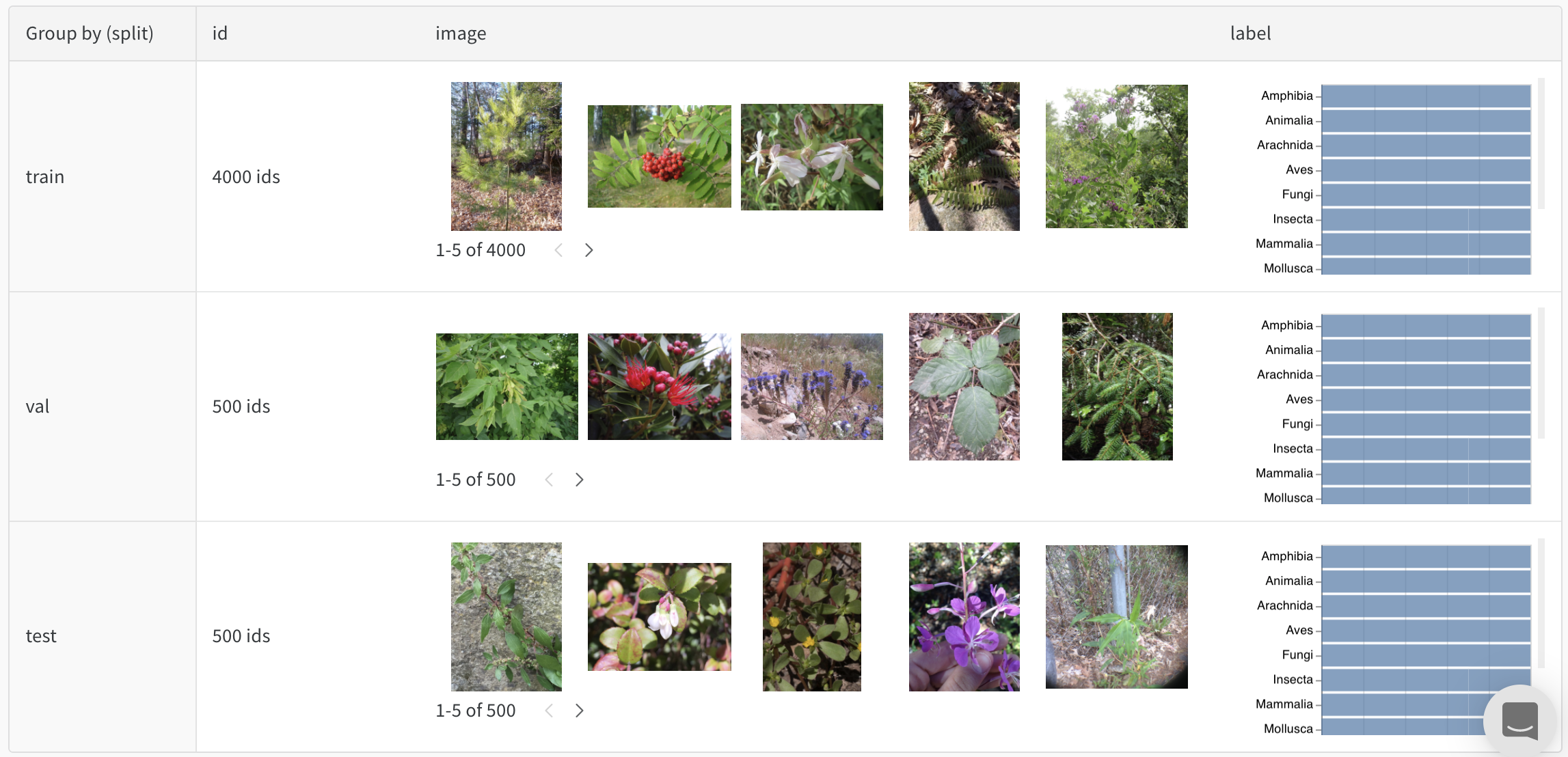

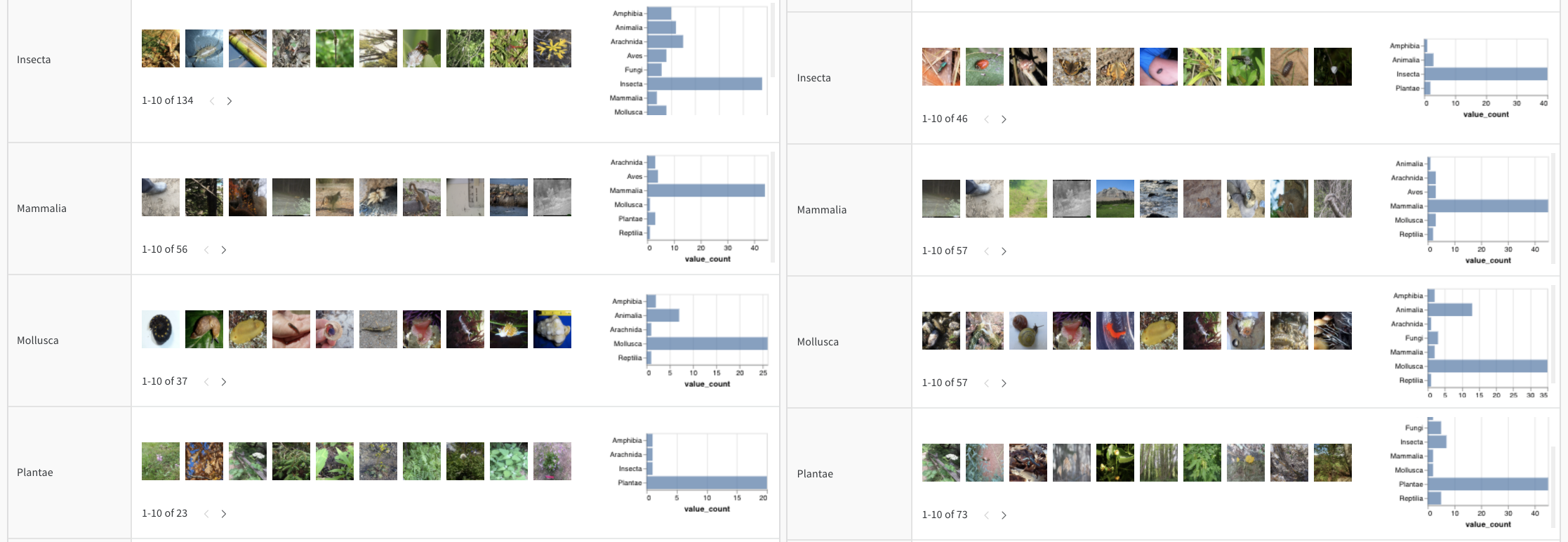

The following sections highlight some real W&B projects that use W&B Tables.

Image classification

Read Visualize Data for Image Classification, follow the data visualization nature Colab, or explore the artifacts context to see how a CNN identifies ten types of living things (plants, birds, insects, etc.) from iNaturalist photos.

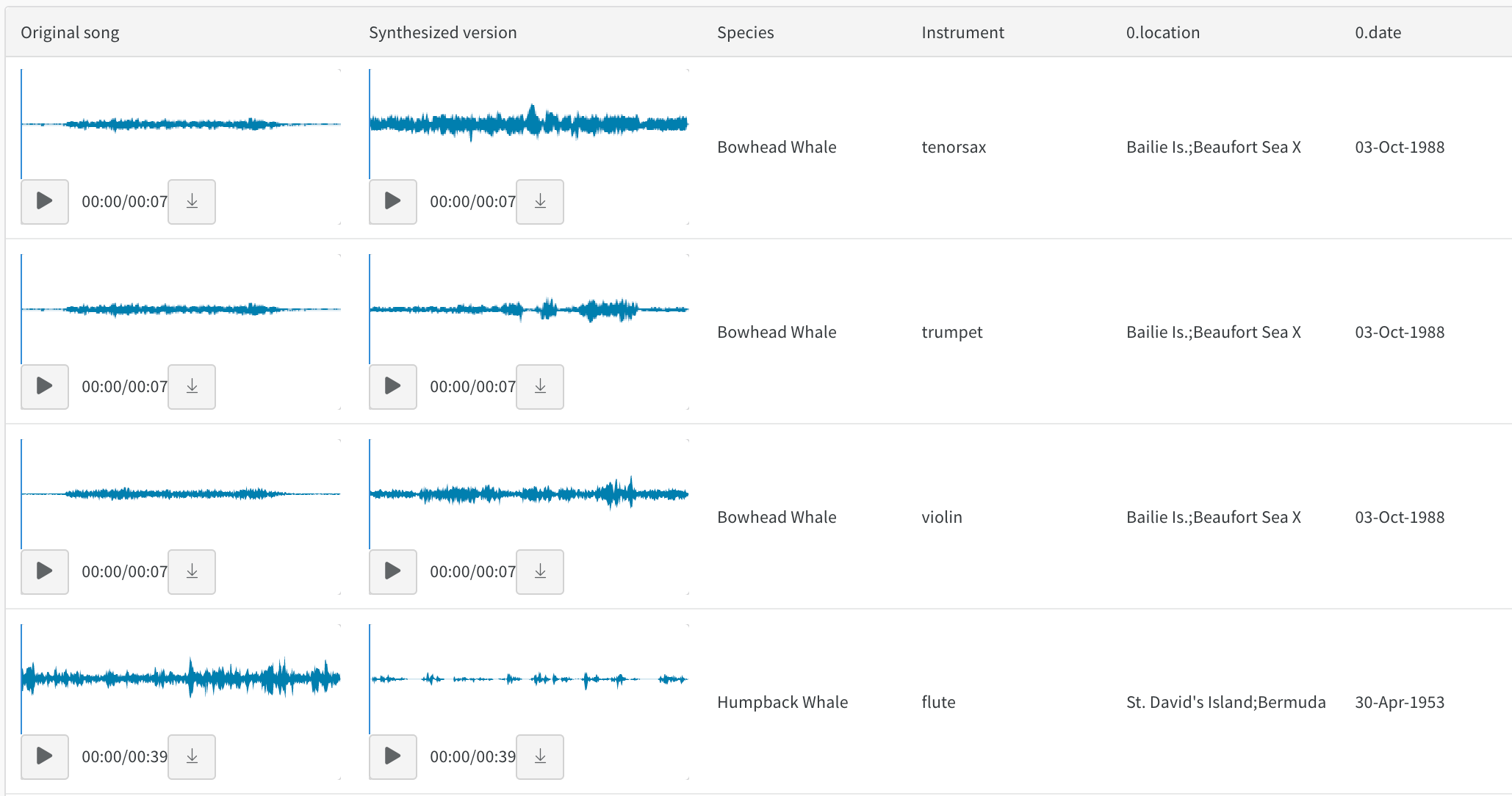

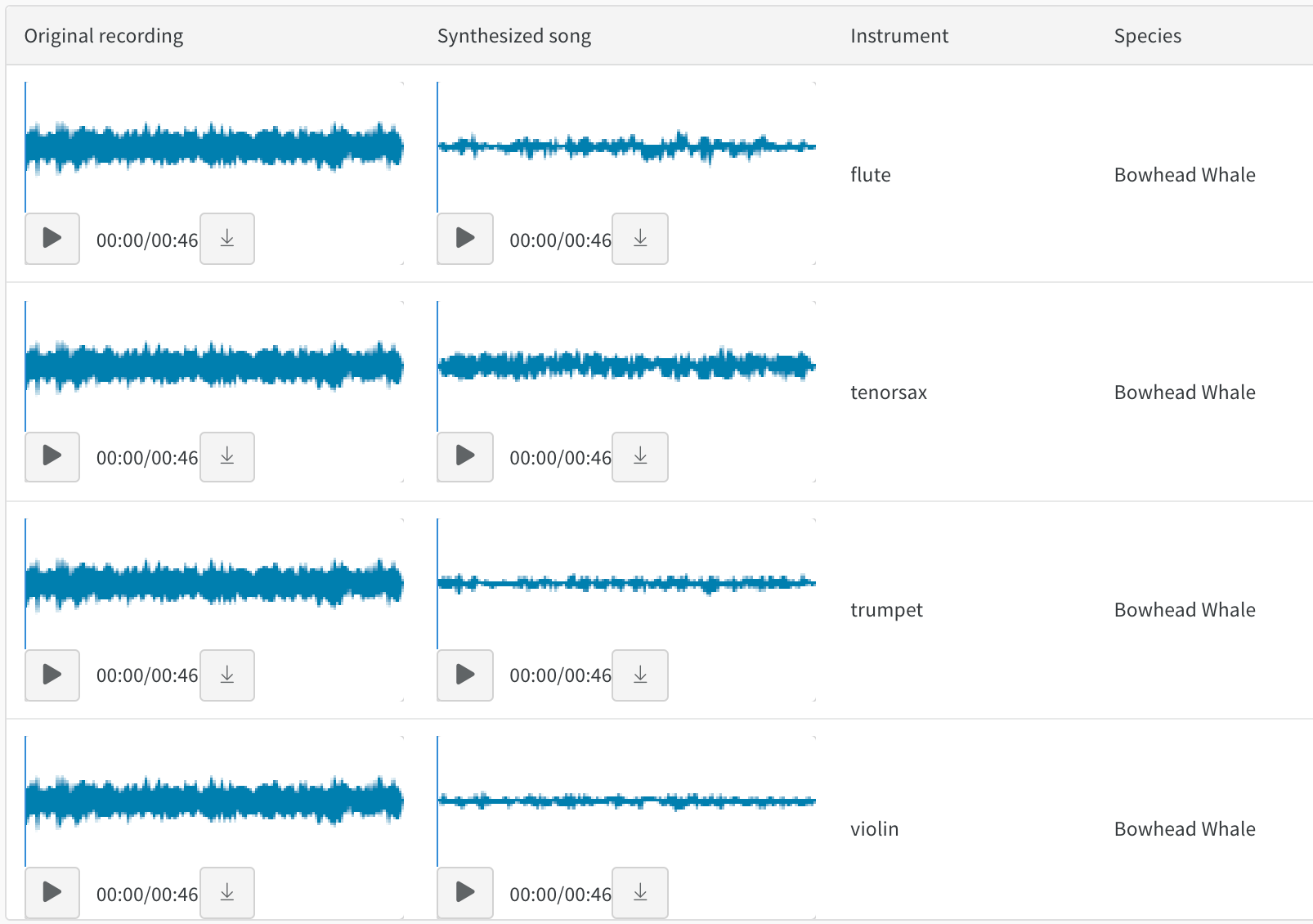

Audio

Interact with audio tables in Whale2Song - W&B Tables for Audio on timbre transfer. You can compare a recorded whale song with a synthesized rendition of the same melody on an instrument like violin or trumpet. You can also record your own songs and explore their synthesized versions in W&B with the audio transfer Colab.

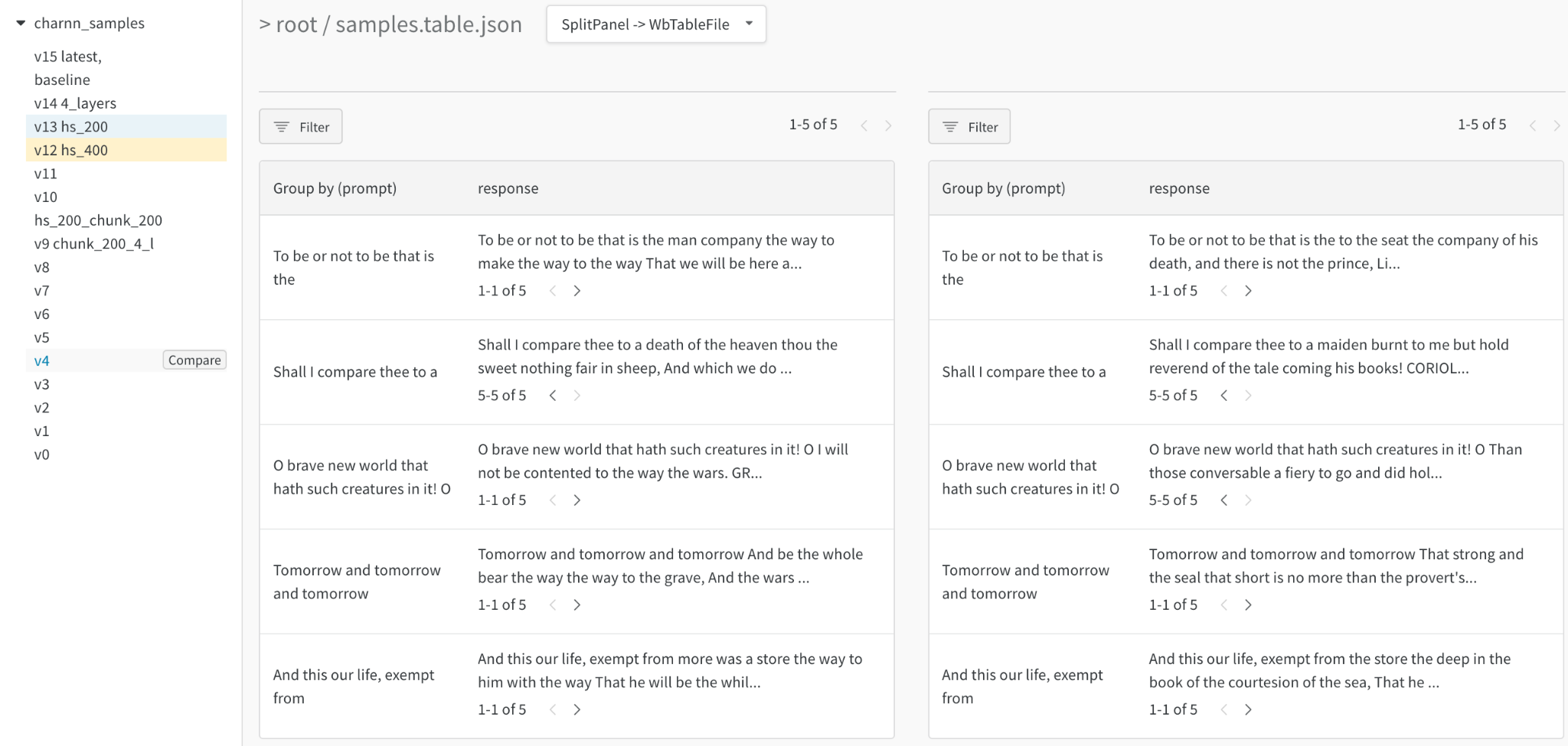
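For readers who want to try an audio table without the Colab, here is a small sketch that is not taken from the Whale2Song project. It synthesizes a short sine tone with the Python standard library and logs it in a table cell with `wandb.Audio`. The file name, captions, table key, and project name are hypothetical.

```python
import math
import struct
import wave


def write_sine_wav(path, freq_hz=440.0, seconds=1.0, sample_rate=16000):
    """Write a mono 16-bit sine tone to `path`; return the frame count."""
    n_frames = int(seconds * sample_rate)
    frames = bytearray()
    for i in range(n_frames):
        # Half amplitude to stay well inside the 16-bit range.
        value = 0.5 * math.sin(2 * math.pi * freq_hz * i / sample_rate)
        frames += struct.pack("<h", int(32767 * value))
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(bytes(frames))
    return n_frames


def log_audio_table(paths_and_captions, project="tables-demo"):
    """Log (path, caption) pairs as playable audio cells (requires `wandb`)."""
    import wandb  # imported lazily so write_sine_wav() works without wandb

    run = wandb.init(project=project, mode="offline")  # assumption: offline
    table = wandb.Table(columns=["caption", "audio"])
    for path, caption in paths_and_captions:
        table.add_data(caption, wandb.Audio(path, caption=caption))
    run.log({"audio_samples": table})
    run.finish()
```

For example, call `write_sine_wav("tone.wav")` and then `log_audio_table([("tone.wav", "440 Hz tone")])`. Adding a second audio column would let you place a source recording next to its synthesized rendition in the same row.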

Text

Browse text samples from training data or generated output, dynamically group by relevant fields, and align your evaluation across model variants or experiment settings. Render text as Markdown or use visual diff mode to compare texts. See the Shakespeare text generation report for an example of a character-based RNN.

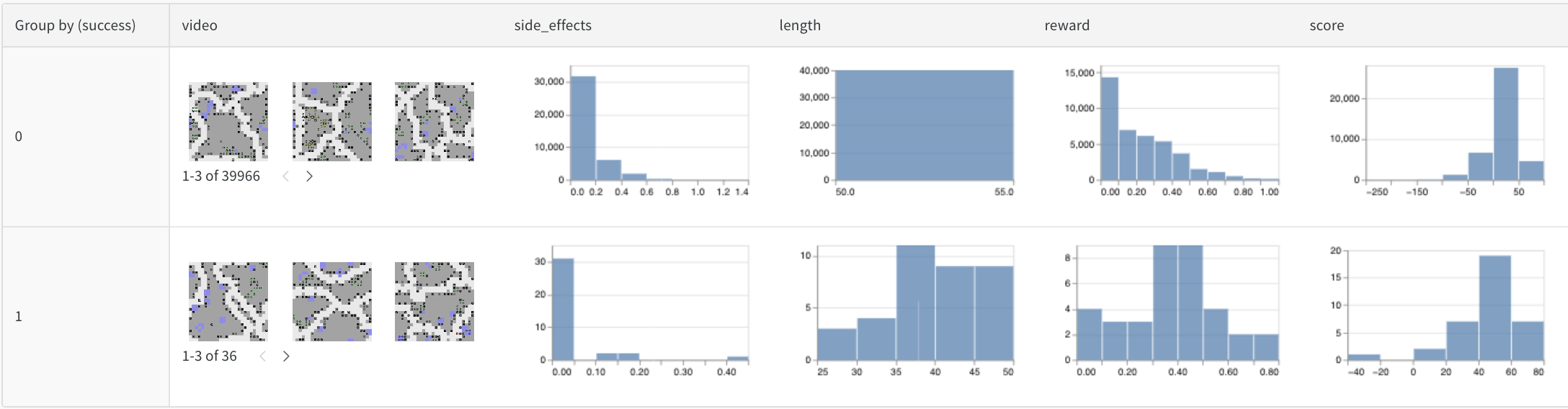

Video

Browse and aggregate over videos logged during training to understand your models. Here is an early example using the SafeLife benchmark for RL agents seeking to minimize side effects.

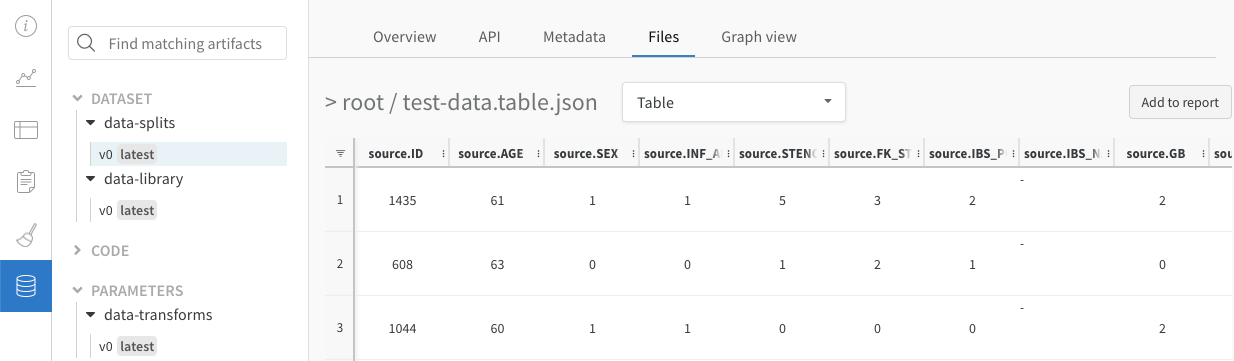

Tabular data

View a report on how to split and pre-process tabular data with version control and de-duplication.

Comparing model variants (semantic segmentation)

See an interactive notebook and a live example of logging Tables for semantic segmentation and comparing different models. Try your own queries in this Table.