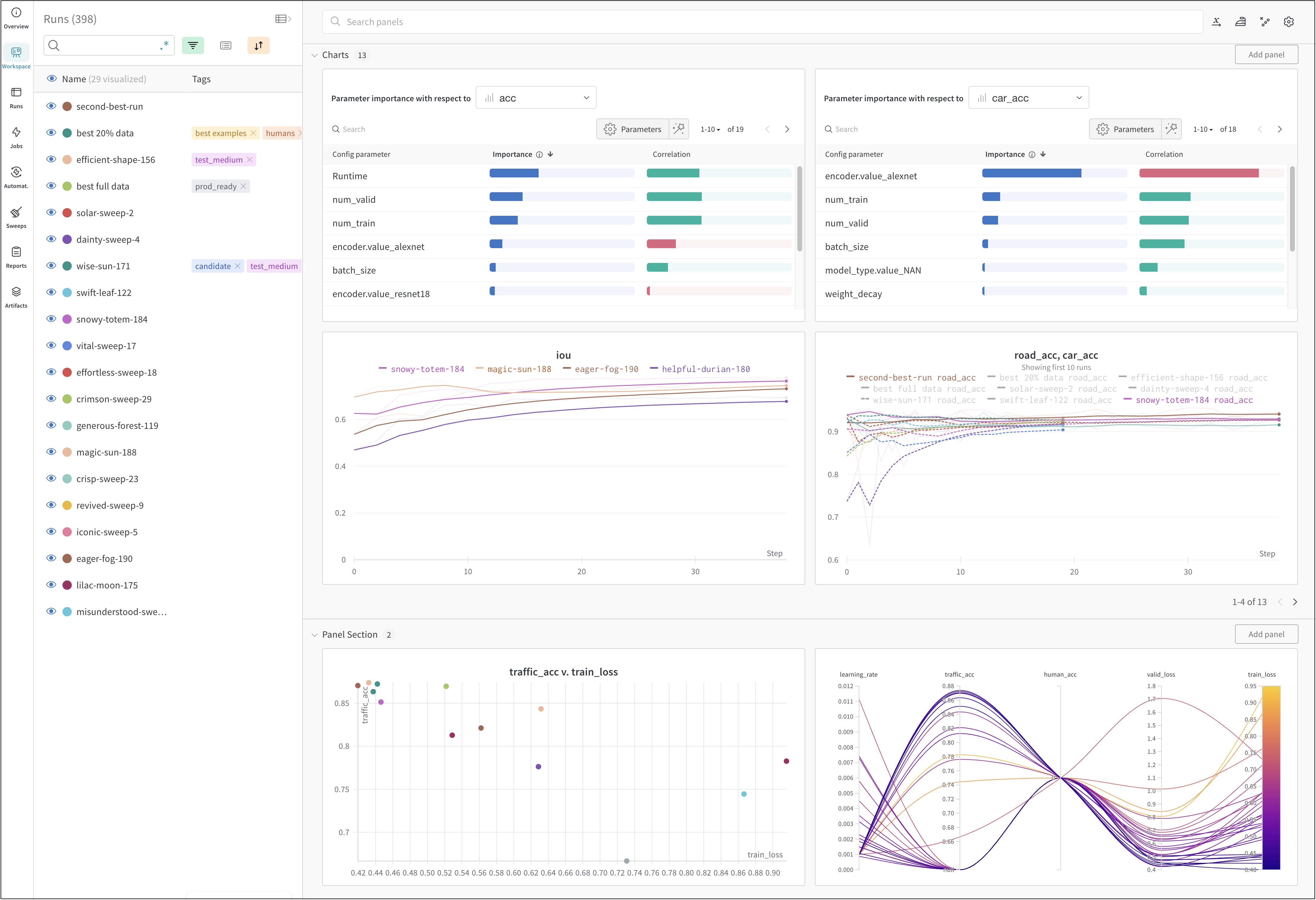

Track machine learning experiments with a few lines of code. You can then review the results in an interactive dashboard or export your data to Python for programmatic access using our Public API. If you use a popular framework such as Keras, use W&B Integrations. See W&B Integrations for a full list of integrations and information on how to add W&B to your code.

How it works

Track a machine learning experiment with a few lines of code:

- Create a W&B Run.
- Store a dictionary of hyperparameters, such as learning rate or model type, in your configuration (`wandb.Run.config`).
- Log metrics, such as accuracy and loss, over time in a training loop (`wandb.Run.log()`).
- Save outputs of a run, like the model weights or a table of predictions.

Get started

Depending on your use case, explore the following resources to get started with W&B Experiments:

- Read the W&B Quickstart for a step-by-step outline of the W&B Python SDK commands you can use to create, track, and use a dataset artifact.
- Explore this chapter to learn how to:
  - Create an experiment
  - Configure experiments
  - Log data from experiments
  - View results from experiments
- Explore the W&B Python Library within the W&B API Reference Guide.