Overview

The W&B Kubernetes Operator is the recommended way to deploy W&B Server on Kubernetes (cloud or on-premises). For an overview of the operator, why W&B uses it, and how configuration hierarchy works, see Self-Managed.

Before you begin

Before deploying W&B with the Kubernetes Operator, ensure your infrastructure meets all requirements:

- Review infrastructure requirements: See the Self-Managed infrastructure requirements page for comprehensive details on:

- Software version requirements (Kubernetes, MySQL, Redis, Helm)

- Hardware requirements (CPU architecture, sizing recommendations)

- Kubernetes cluster configuration

- Networking, SSL/TLS, and DNS requirements

- Obtain a W&B Server license: See the License section on the Requirements page.

- Provision external services: Set up MySQL, Redis, and object storage before deployment.

For additional context, see the reference architecture page.

MySQL Database

W&B requires an external MySQL database.

For production, W&B strongly recommends using managed database services:

Managed database services provide automated backups, monitoring, high availability, patching, and reduce operational overhead.

See the reference architecture for complete MySQL requirements, including sizing recommendations and configuration parameters. For database creation SQL, see the bare-metal guide. For questions about your deployment’s database configuration, contact support or your AISE.

For complete MySQL setup instructions including configuration parameters and database creation, see the MySQL section in the requirements page.

Redis

W&B depends on a single-node Redis 7.x deployment used by W&B’s components for job queuing and data caching. For convenience during testing and development of proofs of concept, W&B Self-Managed includes a local Redis deployment that is not appropriate for production deployments.

For production deployments, W&B can connect to a Redis instance in the following environments:

See the External Redis configuration section for details on how to configure an external Redis instance in Helm values.

Object storage

W&B requires object storage with pre-signed URL and CORS support.

Recommended storage providers:

MinIO Open Source is in maintenance mode with no active development or pre-compiled binaries. For production deployments, W&B recommends using managed object storage services or enterprise S3-compatible solutions such as MinIO Enterprise (AIStor).

Provision your storage bucket

Before configuring W&B, provision your object storage bucket with proper IAM policies, CORS configuration, and access credentials.

See the Bring Your Own Bucket (BYOB) guide for detailed step-by-step provisioning instructions for:

- Amazon S3 (including IAM policies and bucket policies)

- Google Cloud Storage (including PubSub notifications)

- Azure Blob Storage (including managed identities)

- CoreWeave AI Object Storage

- S3-compatible storage (MinIO Enterprise, NetApp StorageGRID, and other enterprise solutions)

See the Object storage configuration section for details on how to configure object storage in Helm values.

OpenShift Kubernetes clusters

W&B supports deployment on OpenShift Kubernetes clusters in cloud, on-premises, and air-gapped environments.

W&B recommends you install with the official W&B Helm chart.

Run the container as an un-privileged user

By default, containers use a $UID of 999. Specify $UID >= 100000 and a $GID of 0 if your orchestrator requires the container to run as a non-root user.

W&B must start as the root group ($GID=0) for file system permissions to function properly.

api:
  install: true
  image:
    repository: wandb/megabinary
    tag: 0.74.1 # Replace with your actual version
  pod:
    securityContext:
      fsGroup: 10001
      fsGroupChangePolicy: Always
      runAsGroup: 0
      runAsNonRoot: true
      runAsUser: 10001
      seccompProfile:
        type: RuntimeDefault
  container:
    securityContext:
      allowPrivilegeEscalation: false
      capabilities:
        drop:
          - ALL
      privileged: false
      readOnlyRootFilesystem: false

If needed, apply a similar security context to other components such as app or console. For details, see Custom security context.
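As a quick sanity check on the rule in this section (non-root user, root group), the following sketch validates a UID/GID pair before you put it into a security context. The function name check_wandb_ids is hypothetical and not part of any W&B tooling; it only encodes the two conditions stated above.

```shell
#!/bin/sh
# Hypothetical helper (not part of W&B tooling): succeeds only when the
# UID is non-root and the GID is the root group (0), as this section requires.
check_wandb_ids() {
  uid="$1"
  gid="$2"
  [ "$uid" -ne 0 ] && [ "$gid" -eq 0 ]
}

# Values taken from the example security context in this section.
if check_wandb_ids 10001 0; then
  echo "runAsUser=10001 runAsGroup=0: OK"
else
  echo "runAsUser=10001 runAsGroup=0: rejected"
fi
```

A pair such as 0/0 (root user) or 10001/10001 (non-root group) makes the function return a non-zero status.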

Deploy W&B Server application

The W&B Kubernetes Operator with Helm is the recommended installation method for all W&B Self-Managed deployments, including cloud, on-premises, and air-gapped environments.

W&B provides a Helm Chart to deploy the W&B Kubernetes operator to a Kubernetes cluster. This approach allows you to deploy W&B Server with Helm CLI or a continuous delivery tool like ArgoCD.

For deployment-specific considerations, see Environment-specific considerations and Deploy with Terraform on public cloud. For disconnected environments, see Deploy on Air-Gapped Kubernetes.

Follow these steps to install the W&B Kubernetes Operator with Helm CLI:

- Add the W&B Helm repository. The W&B Helm chart is available in the W&B Helm repository:

helm repo add wandb https://charts.wandb.ai

helm repo update

- Install the Operator on a Kubernetes cluster:

helm upgrade --install operator wandb/operator -n wandb-cr --create-namespace

- Configure the W&B operator custom resource to trigger the W&B Server installation. Create a file named operator.yaml with your W&B deployment configuration. Refer to Configuration Reference for all available options.

Here’s a minimal example configuration:

apiVersion: apps.wandb.com/v1
kind: WeightsAndBiases
metadata:
  labels:
    app.kubernetes.io/name: weightsandbiases
    app.kubernetes.io/instance: wandb
  name: wandb
  namespace: default
spec:
  values:
    global:
      host: https://<HOST_URI>
      license: eyJhbGnUzaH...j9ZieKQ2x5GGfw
      bucket:
        <details depend on the provider>
      mysql:
        <redacted>
    ingress:
      annotations:
        <redacted>

- Start the Operator with your custom configuration so that it can install, configure, and manage the W&B Server application:

kubectl apply -f operator.yaml

- To verify the installation using the web UI, create the first admin user account, then follow the verification steps outlined in Verify the installation.
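The Helm CLI steps above can be collected into a single script. The following is a minimal sketch, not an official installer: the run helper, the DRY_RUN variable, and the install_wandb_operator function are hypothetical names introduced here. By default the script only prints each command so you can review the sequence. Setting DRY_RUN=0 executes the commands, which assumes helm and kubectl are installed and operator.yaml exists in the current directory.

```shell
#!/bin/sh
# Sketch: run the Helm CLI install steps from this section in order.
# DRY_RUN=1 (the default here) prints the commands instead of running them.
DRY_RUN="${DRY_RUN:-1}"

# Hypothetical helper: print the command in dry-run mode, otherwise run it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

install_wandb_operator() {
  run helm repo add wandb https://charts.wandb.ai
  run helm repo update
  run helm upgrade --install operator wandb/operator -n wandb-cr --create-namespace
  run kubectl apply -f operator.yaml
}

install_wandb_operator
```

Keeping the dry-run default makes it harder to apply a half-edited operator.yaml by accident.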

Deploy W&B using Terraform for infrastructure-as-code deployments. Choose between:

- Helm Terraform Module: Deploys the operator to existing Kubernetes infrastructure

- Cloud Terraform Modules: Complete infrastructure + application deployment for AWS, Google Cloud, and Azure

For deployment-specific considerations, see Environment-specific considerations and Deploy with Terraform on public cloud. For disconnected environments, see Deploy on Air-Gapped Kubernetes.

This method allows for customized deployments tailored to specific requirements, leveraging Terraform’s infrastructure-as-code approach for consistency and repeatability. The official W&B Helm-based Terraform Module is located here.

Use the following code as a starting point. It includes all necessary configuration options for a production grade deployment:

module "wandb" {
  source = "wandb/wandb/helm"

  spec = {
    values = {
      global = {
        host    = "https://<HOST_URI>"
        license = "eyJhbGnUzaH...j9ZieKQ2x5GGfw"

        bucket = {
          <details depend on the provider>
        }

        mysql = {
          <redacted>
        }
      }

      ingress = {
        annotations = {
          "a" = "b"
          "x" = "y"
        }
      }
    }
  }
}

Verify the installation

To verify the installation, W&B recommends using the W&B CLI. The verify command executes several tests that verify all components and configurations.

This step assumes that the first admin user account is created with the browser.

- Install the W&B CLI:

pip install wandb

- Log in to W&B:

wandb login --host=https://YOUR_DNS_DOMAIN

For example:

wandb login --host=https://wandb.company-name.com

- Verify the installation:

wandb verify

A successful installation and fully working W&B deployment shows the following output:

Default host selected: https://wandb.company-name.com

Find detailed logs for this test at: /var/folders/pn/b3g3gnc11_sbsykqkm3tx5rh0000gp/T/tmpdtdjbxua/wandb

Checking if logged in...................................................✅

Checking signed URL upload..............................................✅

Checking ability to send large payloads through proxy...................✅

Checking requests to base url...........................................✅

Checking requests made over signed URLs.................................✅

Checking CORs configuration of the bucket...............................✅

Checking wandb package version is up to date............................✅

Checking logged metrics, saving and downloading a file..................✅

Checking artifact save and download workflows...........................✅

Environment-specific considerations

Kubernetes is the same whether it runs on-premises or in the cloud. The main differences are in naming and managed services (for example, MySQL vs RDS, or S3 vs on-premises object storage). This section covers considerations that vary by environment.

When deploying on on-premises or bare-metal Kubernetes, pay attention to the following.

Load balancer configuration

On-premises Kubernetes clusters typically require manual load balancer configuration. Options include:

- External load balancer: Configure an existing hardware or software load balancer (F5, HAProxy, etc.)

- Nginx Ingress Controller: Deploy nginx-ingress-controller with NodePort or host networking

- MetalLB: For bare-metal Kubernetes clusters, MetalLB provides load balancer services

For detailed load balancer configuration examples, see the Reference Architecture networking section.

Persistent storage

Ensure your Kubernetes cluster has a StorageClass configured for persistent volumes. W&B components may require persistent storage for caching and temporary data.

Common on-premises storage options:

- NFS-based storage classes

- Ceph/Rook storage

- Local persistent volumes

- Enterprise storage solutions (NetApp, Pure Storage, etc.)

DNS and certificate management

For on-premises deployments:

- Configure internal DNS records to point to your W&B hostname

- Provision SSL/TLS certificates from your internal Certificate Authority (CA)

- If using self-signed certificates, configure the operator to trust your CA certificate

See the SSL/TLS requirements for certificate configuration details.

OpenShift deployments

W&B fully supports deployment on OpenShift Kubernetes clusters. OpenShift deployments require additional security context configurations due to OpenShift’s stricter security policies.

For OpenShift-specific configuration details, see OpenShift Kubernetes clusters above. For comprehensive OpenShift examples in air-gapped environments, see Deploy on Air-Gapped Kubernetes.

Object storage for on-premises and S3-compatible

After provisioning your object storage bucket (see Object storage provisioning), configure it in your W&B Custom Resource.

AWS S3 (on-premises)

For on-premises AWS S3 (via Outposts or compatible storage):

bucket:
  kmsKey: <kms key arn> # Optional KMS key for encryption
  name: <bucket name> # Example: wandb
  path: "" # Keep as empty string
  provider: s3
  region: <region> # Example: us-east-1

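For S3-compatible storage, set name to the storage endpoint and path to the bucket name:

bucket:
  kmsKey: null
  name: <s3 endpoint> # Example: s3.example.com:9000
  path: <bucket name> # Example: wandb
  provider: s3
  region: <region> # Example: us-east-1

To enable TLS, append ?tls=true to the bucket path:

bucket:
  path: "wandb?tls=true"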

The certificate must be trusted. Self-signed certificates require additional configuration. See the SSL/TLS requirements for details.

- Storage capacity and performance: Monitor disk capacity carefully. Average W&B usage results in tens to hundreds of gigabytes. Heavy usage could result in petabytes of storage consumption.

- Fault tolerance: At minimum, use RAID arrays for physical disks. For S3-compatible storage, use distributed or highly available configurations.

- Availability: Configure monitoring to ensure the storage remains available.

MinIO considerations

MinIO Open Source is in maintenance mode with no active development. Pre-compiled binaries are no longer provided, and only critical security fixes are considered case-by-case. For production deployments, W&B recommends using managed object storage services or MinIO Enterprise (AIStor).

mc config host add local http://$MINIO_HOST:$MINIO_PORT "$MINIO_ACCESS_KEY" "$MINIO_SECRET_KEY" --api s3v4

mc mb --region=us-east-1 local/wandb-files

W&B recommends fully managed deployment options such as W&B Multi-tenant Cloud or W&B Dedicated Cloud deployment types. W&B fully managed services are simple and secure to use, with minimal to no configuration required.

W&B recommends using the W&B Server AWS Terraform Module to deploy the platform on AWS.

The Terraform Module deploys the following mandatory components:

- Load Balancer

- AWS Identity & Access Management (IAM)

- AWS Key Management Service (KMS)

- Amazon Aurora MySQL

- Amazon VPC

- Amazon S3

- Amazon Route 53

- AWS Certificate Manager (ACM)

- Amazon Elastic Load Balancing (ALB)

- AWS Secrets Manager

Optional components include:

- Amazon ElastiCache for Redis

- SQS

Pre-requisite permissions

The account that runs Terraform must be able to create all of the components described above, and must have permission to create IAM policies and IAM roles and to assign roles to resources.

General steps

The steps in this section are common for any deployment option.

- Prepare the development environment.

  - Install Terraform.

  - W&B recommends creating a Git repository for version control.

- Create the terraform.tfvars file.

The tfvars file content can be customized according to the installation type, but the minimum recommended configuration looks like the following example.

namespace = "wandb"

license = "xxxxxxxxxxyyyyyyyyyyyzzzzzzz"

subdomain = "wandb-aws"

domain_name = "wandb.ml"

zone_id = "xxxxxxxxxxxxxxxx"

allowed_inbound_cidr = ["0.0.0.0/0"]

allowed_inbound_ipv6_cidr = ["::/0"]

eks_cluster_version = "1.29"

Define all variables in your tfvars file before you deploy, because the namespace variable is a string that prefixes all resources created by Terraform.

The combination of subdomain and domain_name forms the FQDN for your W&B instance. In the example above, the W&B FQDN is wandb-aws.wandb.ml, and zone_id identifies the DNS zone where the FQDN record is created.

Both allowed_inbound_cidr and allowed_inbound_ipv6_cidr are mandatory inputs to the module. The preceding example permits access to the W&B installation from any source.

- Create the file versions.tf.

This file will contain the Terraform and Terraform provider versions required to deploy W&B in AWS:

provider "aws" {
  region = "eu-central-1"

  default_tags {
    tags = {
      GithubRepo  = "terraform-aws-wandb"
      GithubOrg   = "wandb"
      Environment = "Example"
      Example     = "PublicDnsExternal"
    }
  }
}

- Create the file variables.tf.

For every option configured in terraform.tfvars, Terraform requires a corresponding variable declaration.

variable "namespace" {
  type        = string
  description = "Name prefix used for resources"
}

variable "domain_name" {
  type        = string
  description = "Domain name used to access instance."
}

variable "subdomain" {
  type        = string
  default     = null
  description = "Subdomain for accessing the Weights & Biases UI."
}

variable "license" {
  type = string
}

variable "zone_id" {
  type        = string
  description = "Domain for creating the Weights & Biases subdomain on."
}

variable "allowed_inbound_cidr" {
  description = "CIDRs allowed to access wandb-server."
  nullable    = false
  type        = list(string)
}

variable "allowed_inbound_ipv6_cidr" {
  description = "CIDRs allowed to access wandb-server."
  nullable    = false
  type        = list(string)
}

variable "eks_cluster_version" {
  description = "EKS cluster kubernetes version"
  nullable    = false
  type        = string
}
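To make the FQDN rule from the General steps concrete, the following sketch joins subdomain and domain_name as described there. The wandb_fqdn function is a hypothetical helper. Treating an empty subdomain as "use the bare domain" is an assumption based on the default = null declaration in variables.tf, not confirmed module behavior.

```shell
#!/bin/sh
# Hypothetical helper: builds the FQDN from the subdomain and domain_name
# values in terraform.tfvars. An empty subdomain falls back to the bare
# domain (assumption, see the lead-in).
wandb_fqdn() {
  subdomain="$1"
  domain_name="$2"
  if [ -n "$subdomain" ]; then
    printf '%s.%s\n' "$subdomain" "$domain_name"
  else
    printf '%s\n' "$domain_name"
  fi
}

# Values from the example terraform.tfvars in the General steps.
echo "W&B URL: https://$(wandb_fqdn "wandb-aws" "wandb.ml")"
```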

Recommended deployment

This is the most straightforward deployment option. It creates all mandatory components and installs the latest version of W&B in the Kubernetes cluster.

- Create the main.tf file.

In the same directory where you created the files in General steps, create a file named main.tf with the following content:

module "wandb_infra" {
  source  = "wandb/wandb/aws"
  version = "~>7.0"

  namespace   = var.namespace
  domain_name = var.domain_name
  license     = var.license
  subdomain   = var.subdomain
  zone_id     = var.zone_id

  allowed_inbound_cidr      = var.allowed_inbound_cidr
  allowed_inbound_ipv6_cidr = var.allowed_inbound_ipv6_cidr

  public_access = true
  external_dns  = true

  kubernetes_public_access       = true
  kubernetes_public_access_cidrs = ["0.0.0.0/0"]

  eks_cluster_version = var.eks_cluster_version
}

data "aws_eks_cluster" "eks_cluster_id" {
  name = module.wandb_infra.cluster_name
}

data "aws_eks_cluster_auth" "eks_cluster_auth" {
  name = module.wandb_infra.cluster_name
}

provider "kubernetes" {
  host                   = data.aws_eks_cluster.eks_cluster_id.endpoint
  cluster_ca_certificate = base64decode(data.aws_eks_cluster.eks_cluster_id.certificate_authority.0.data)
  token                  = data.aws_eks_cluster_auth.eks_cluster_auth.token
}

provider "helm" {
  kubernetes {
    host                   = data.aws_eks_cluster.eks_cluster_id.endpoint
    cluster_ca_certificate = base64decode(data.aws_eks_cluster.eks_cluster_id.certificate_authority.0.data)
    token                  = data.aws_eks_cluster_auth.eks_cluster_auth.token
  }
}

output "url" {
  value = module.wandb_infra.url
}

output "bucket" {
  value = module.wandb_infra.bucket_name
}

- Deploy W&B.

To deploy W&B, execute the following commands:

terraform init

terraform apply -var-file=terraform.tfvars

Enable Redis

To use Redis to cache SQL queries and speed up the application response when loading metrics, add the option create_elasticache_subnet = true to the main.tf file:

module "wandb_infra" {
  source  = "wandb/wandb/aws"
  version = "~>7.0"

  namespace   = var.namespace
  domain_name = var.domain_name
  subdomain   = var.subdomain
  zone_id     = var.zone_id

  create_elasticache_subnet = true
}

[...]

Enable message broker (queue)

Using an external message broker is optional because W&B includes an embedded broker. This option does not bring a performance improvement.

To enable an external message broker using SQS, add the option use_internal_queue = false to the main.tf file:

module "wandb_infra" {
  source  = "wandb/wandb/aws"
  version = "~>7.0"

  namespace   = var.namespace
  domain_name = var.domain_name
  subdomain   = var.subdomain
  zone_id     = var.zone_id

  use_internal_queue = false

  [...]
}

Additional resources

W&B recommends using the W&B Server Google Cloud Terraform Module to deploy the platform on Google Cloud.

The module documentation is extensive and contains all available options that can be used.

Before you start, W&B recommends that you choose one of the remote backends available for Terraform to store the State File. The State File is the necessary resource to roll out upgrades or make changes in your deployment without recreating all components.

The Terraform Module deploys the following mandatory components:

- VPC

- Cloud SQL for MySQL

- Cloud Storage Bucket

- Google Kubernetes Engine

- Memorystore for Redis

- KMS Crypto Key

- Load Balancer

Optional components include:

Prerequisite permissions

The account that will run Terraform needs to have the role roles/owner in the Google Cloud project used.

General steps

The steps in this section are common for any deployment option.

- Prepare the development environment.

  - Install Terraform.

  - W&B recommends creating a Git repository with the code that will be used, but you can keep your files locally.

  - Create a project in Google Cloud Console.

  - Authenticate with Google Cloud using gcloud auth application-default login (install gcloud first).

- Create the terraform.tfvars file.

The tfvars file content can be customized according to the installation type, but the minimum recommended configuration looks like the following example.

project_id = "wandb-project"

region = "europe-west2"

zone = "europe-west2-a"

namespace = "wandb"

license = "xxxxxxxxxxyyyyyyyyyyyzzzzzzz"

subdomain = "wandb-gcp"

domain_name = "wandb.ml"

The namespace variable is a string that prefixes all resources created by Terraform.

The combination of subdomain and domain_name forms the FQDN at which W&B is configured. In the example above, the W&B FQDN is wandb-gcp.wandb.ml.

- Create the file variables.tf.

For every option configured in terraform.tfvars, Terraform requires a corresponding variable declaration.

variable "project_id" {
  type        = string
  description = "Project ID"
}

variable "region" {
  type        = string
  description = "Google region"
}

variable "zone" {
  type        = string
  description = "Google zone"
}

variable "namespace" {
  type        = string
  description = "Namespace prefix used for resources"
}

variable "domain_name" {
  type        = string
  description = "Domain name for accessing the Weights & Biases UI."
}

variable "subdomain" {
  type        = string
  description = "Subdomain for accessing the Weights & Biases UI."
}

variable "license" {
  type        = string
  description = "W&B License"
}

Recommended deployment

This is the most straightforward deployment option. It creates all mandatory components and installs the latest version of W&B in the Kubernetes cluster.

- Create the main.tf file.

In the same directory where you created the files in General steps, create a file named main.tf with the following content:

provider "google" {
  project = var.project_id
  region  = var.region
  zone    = var.zone
}

provider "google-beta" {
  project = var.project_id
  region  = var.region
  zone    = var.zone
}

data "google_client_config" "current" {}

provider "kubernetes" {
  host                   = "https://${module.wandb.cluster_endpoint}"
  cluster_ca_certificate = base64decode(module.wandb.cluster_ca_certificate)
  token                  = data.google_client_config.current.access_token
}

provider "helm" {
  kubernetes {
    host                   = "https://${module.wandb.cluster_endpoint}"
    cluster_ca_certificate = base64decode(module.wandb.cluster_ca_certificate)
    token                  = data.google_client_config.current.access_token
  }
}

# Spin up all required services
module "wandb" {
  source  = "wandb/wandb/google"
  version = "~> 10.0"

  namespace   = var.namespace
  license     = var.license
  domain_name = var.domain_name
  subdomain   = var.subdomain
}

# You'll want to update your DNS with the provisioned IP address
output "url" {
  value = module.wandb.url
}

output "address" {
  value = module.wandb.address
}

output "bucket_name" {
  value = module.wandb.bucket_name
}

- Deploy W&B.

To deploy W&B, execute the following commands:

terraform init

terraform apply -var-file=terraform.tfvars

Enable Redis

To use Redis to cache SQL queries and speed up the application response when loading metrics, add the option create_redis = true to the main.tf file:

[...]

module "wandb" {
  source  = "wandb/wandb/google"
  version = "~> 10.0"

  namespace   = var.namespace
  license     = var.license
  domain_name = var.domain_name
  subdomain   = var.subdomain

  create_redis = true
}

[...]

Enable message broker (queue)

Using an external message broker is optional because W&B includes an embedded broker. This option does not bring a performance improvement.

To enable an external message broker using Pub/Sub, add the option use_internal_queue = false to the main.tf file:

[...]

module "wandb" {
  source  = "wandb/wandb/google"
  version = "~> 10.0"

  namespace   = var.namespace
  license     = var.license
  domain_name = var.domain_name
  subdomain   = var.subdomain

  use_internal_queue = false
}

[...]

Additional resources

W&B recommends using the W&B Server Azure Terraform Module to deploy the platform on Azure.

The module documentation is extensive and contains all available options that can be used.

The Terraform Module deploys the following mandatory components:

- Azure Resource Group

- Azure Virtual Network (VNet)

- Azure MySQL Flexible Server

- Azure Storage Account & Blob Storage

- Azure Kubernetes Service

- Azure Application Gateway

Optional components include:

- Azure Cache for Redis

- Azure Event Grid

Pre-requisite permissions

The simplest way to configure the AzureRM provider is through the Azure CLI. For automation, an Azure Service Principal can also be useful.

Regardless of the authentication method used, the account that will run Terraform needs to be able to create all components described above.

General steps

The steps in this section are common for any deployment option.

- Prepare the development environment.

  - Install Terraform.

  - W&B recommends creating a Git repository with the code that will be used, but you can keep your files locally.

- Create the terraform.tfvars file.

The tfvars file content can be customized according to the installation type, but the minimum recommended configuration looks like the following example.

namespace = "wandb"

license = "xxxxxxxxxxyyyyyyyyyyyzzzzzzz"

subdomain = "wandb-azure"

domain_name = "wandb.ml"

location = "westeurope"

The namespace variable is a string that prefixes all resources created by Terraform.

The combination of subdomain and domain_name forms the FQDN at which W&B is configured. In the example above, the W&B FQDN is wandb-azure.wandb.ml.

- Create the file versions.tf.

This file will contain the Terraform and Terraform provider versions required to deploy W&B in Azure:

terraform {
  required_version = "~> 1.3"

  required_providers {
    azurerm = {
      source  = "hashicorp/azurerm"
      version = "~> 3.17"
    }
  }
}

- Create the file variables.tf.

For every option configured in terraform.tfvars, Terraform requires a corresponding variable declaration.

variable "namespace" {
  type        = string
  description = "String used for prefix resources."
}

variable "location" {
  type        = string
  description = "Azure Resource Group location"
}

variable "domain_name" {
  type        = string
  description = "Domain for accessing the Weights & Biases UI."
}

variable "subdomain" {
  type        = string
  default     = null
  description = "Subdomain for accessing the Weights & Biases UI. Default creates record at Route53 Route."
}

variable "license" {
  type        = string
  description = "Your wandb/local license"
}

Recommended deployment

This is the most straightforward deployment option. It creates all mandatory components and installs the latest version of W&B in the Kubernetes cluster.

- Create the main.tf file.

In the same directory where you created the files in General steps, create a file named main.tf with the following content:

provider "azurerm" {
  features {}
}

provider "kubernetes" {
  host                   = module.wandb.cluster_host
  cluster_ca_certificate = base64decode(module.wandb.cluster_ca_certificate)
  client_key             = base64decode(module.wandb.cluster_client_key)
  client_certificate     = base64decode(module.wandb.cluster_client_certificate)
}

provider "helm" {
  kubernetes {
    host                   = module.wandb.cluster_host
    cluster_ca_certificate = base64decode(module.wandb.cluster_ca_certificate)
    client_key             = base64decode(module.wandb.cluster_client_key)
    client_certificate     = base64decode(module.wandb.cluster_client_certificate)
  }
}

# Spin up all required services
module "wandb" {
  source  = "wandb/wandb/azurerm"
  version = "~> 1.2"

  namespace   = var.namespace
  location    = var.location
  license     = var.license
  domain_name = var.domain_name
  subdomain   = var.subdomain

  deletion_protection = false

  tags = {
    "Example" : "PublicDns"
  }
}

output "address" {
  value = module.wandb.address
}

output "url" {
  value = module.wandb.url
}

- Deploy W&B.

To deploy W&B, execute the following commands:

terraform init

terraform apply -var-file=terraform.tfvars

Enable Redis

To use Redis to cache SQL queries and speed up the application response when loading metrics, add the option create_redis = true to the main.tf file:

# Spin up all required services
module "wandb" {
  source  = "wandb/wandb/azurerm"
  version = "~> 1.2"

  namespace   = var.namespace
  location    = var.location
  license     = var.license
  domain_name = var.domain_name
  subdomain   = var.subdomain

  create_redis = true

  [...]
}

Enable message broker (queue)

Using an external message broker is optional because W&B includes an embedded broker. This option does not bring a performance improvement.

To enable an external message broker using Azure Event Grid, add the option use_internal_queue = false to the main.tf file:

# Spin up all required services
module "wandb" {
  source  = "wandb/wandb/azurerm"
  version = "~> 1.2"

  namespace   = var.namespace
  location    = var.location
  license     = var.license
  domain_name = var.domain_name
  subdomain   = var.subdomain

  use_internal_queue = false

  [...]
}

Additional resources

Other deployment options

You can combine multiple deployment options by adding all configurations to the same file. Each Terraform module provides several options that can be combined with the standard options and the minimal configuration found in the recommended deployment section.

Refer to the module documentation for your cloud provider for the full list of available options:

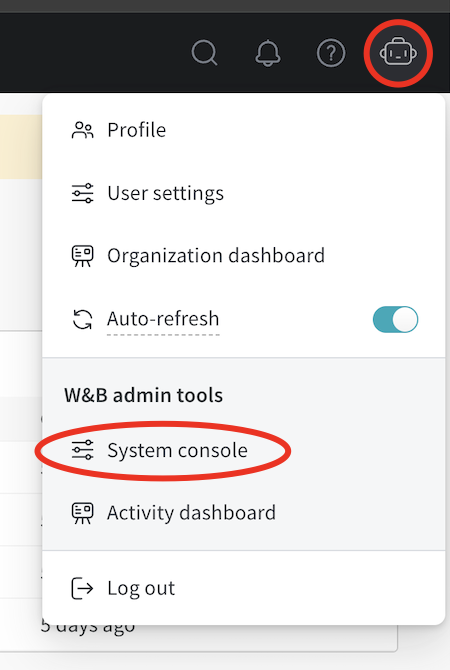

Access the W&B Management Console

The W&B Kubernetes operator comes with a management console. It is located at ${HOST_URI}/console, for example https://wandb.company-name.com/console.

There are two ways to log in to the management console:

Option 1 (Recommended)

Option 2

-

Open the W&B application in the browser and log in. Log in to the W&B application with

${HOST_URI}/, for example https://wandb.company-name.com/

-

Access the console. Click on the icon in the top right corner and then click System console. Only users with admin privileges can see the System console entry.

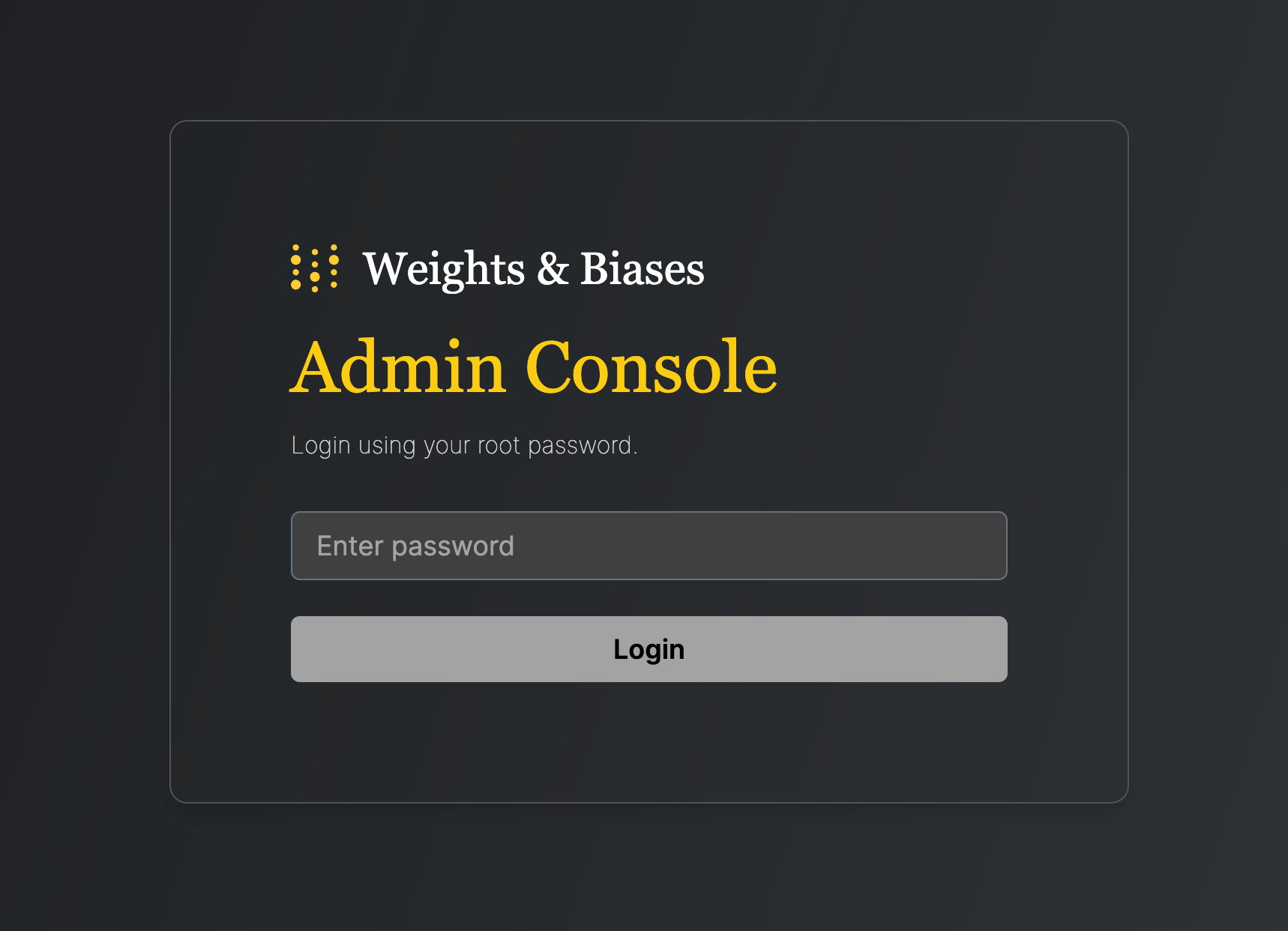

W&B recommends you access the console using the following steps only if Option 1 does not work.

- Open console application in browser. Open the above described URL, which redirects you to the login screen:

- Retrieve the password from the Kubernetes secret that the installation generates:

kubectl get secret wandb-password -o jsonpath='{.data.password}' | base64 -d

- Log in to the console. Paste the copied password, then click Login.
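Kubernetes stores secret values base64-encoded, which is why the kubectl command in this section pipes the jsonpath output through base64 -d. The following sketch shows only the decoding step on a made-up value. The string example-password is illustrative and not a real credential, and decode_secret is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: decodes a base64-encoded secret value read from stdin,
# mirroring the `| base64 -d` part of the kubectl command in this section.
decode_secret() {
  base64 -d
}

# "ZXhhbXBsZS1wYXNzd29yZA==" is the base64 encoding of "example-password".
encoded="ZXhhbXBsZS1wYXNzd29yZA=="
printf '%s' "$encoded" | decode_secret
echo
```

If the decoded output is empty against a real cluster, one possible cause is that the secret lives in a different namespace than your current kubectl context.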

Update the W&B Kubernetes operator

This section describes how to update the W&B Kubernetes operator.

- Updating the W&B Kubernetes operator does not update the W&B server application.

- If you use a Helm chart that does not use the W&B Kubernetes operator, see the instructions here before you follow the instructions below to update the W&B operator.

- First, update the repo with helm repo update:

helm repo update

- Next, update the Helm chart with helm upgrade:

helm upgrade operator wandb/operator -n wandb-cr --reuse-values

Update the W&B Server application

You no longer need to update the W&B Server application manually if you use the W&B Kubernetes operator.

The operator automatically updates your W&B Server application when W&B releases a new version of the software.

Migrate Self-Managed instances to W&B Operator

The following sections describe how to migrate from self-managing your own W&B Server installation to using the W&B Operator to do this for you. The migration process depends on how you installed W&B Server:

The W&B Operator is the default and recommended installation method for W&B Server. Reach out to Customer Support or your W&B team if you have any questions.

Migrate to Operator-based Helm chart

Follow these steps to migrate to the Operator-based Helm chart:

- Get the current W&B configuration. If W&B was deployed with a non-operator-based version of the Helm chart, export the values like this:

If W&B was deployed with Kubernetes manifests, export the values like this:

kubectl get deployment wandb -o yaml

- Create a file called operator.yaml. Follow the format described in the Configuration Reference. Use the values from step 1.

- Scale the current deployment to 0 pods. This step stops the current deployment.

kubectl scale --replicas=0 deployment wandb

- Update the Helm chart repo:

helm repo update

- Install the new Helm chart:

helm upgrade --install operator wandb/operator -n wandb-cr --create-namespace

- Configure the new Helm chart and trigger W&B application deployment. Apply the new configuration.

kubectl apply -f operator.yaml

- Verify the installation. Make sure that everything works by following the steps in Verify the installation.

- Remove the old installation. Uninstall the old Helm chart or delete the resources that were created with manifests.
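The command-line portion of the migration (scale down, update the repo, install the operator chart, apply the custom resource) can be sketched as one script. This is an illustrative outline rather than an official migration tool: run, DRY_RUN, and migrate_to_operator are hypothetical names. It does not cover exporting the old configuration, writing operator.yaml, verification, or cleanup, which remain manual. By default it only prints the commands. Setting DRY_RUN=0 executes them and assumes helm, kubectl, and operator.yaml are available.

```shell
#!/bin/sh
# Sketch: the command-line steps of the migration, in the documented order.
# DRY_RUN=1 (the default here) prints the commands instead of running them.
DRY_RUN="${DRY_RUN:-1}"

# Hypothetical helper: print the command in dry-run mode, otherwise run it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

migrate_to_operator() {
  # Stop the old deployment before the operator takes over.
  run kubectl scale --replicas=0 deployment wandb
  run helm repo update
  run helm upgrade --install operator wandb/operator -n wandb-cr --create-namespace
  # Applying the custom resource triggers the W&B Server deployment.
  run kubectl apply -f operator.yaml
}

migrate_to_operator
```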

Follow these steps to migrate a Terraform-based deployment to the Operator-based Helm chart:

- Prepare the Terraform config. Replace the Terraform code from the old deployment in your Terraform config with the one that is described here. Set the same variables as before. Do not change the .tfvars file if you have one.

- Execute the Terraform run. Run terraform init, terraform plan, and terraform apply.

- Verify the installation. Make sure that everything works by following the steps in Verify the installation.

- Remove the old installation. Uninstall the old Helm chart or delete the resources that were created with manifests.
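The Terraform run in the second step can be sketched as follows. The function name run_terraform_migration and the plan file name tfplan are assumptions; saving the plan with -out and applying that exact file is one common way to make sure that what was reviewed is what gets applied.

```shell
# Hypothetical helper: initialize, write the plan to a file, then apply that plan.
# Run it from the directory that contains your Terraform config.
run_terraform_migration() {
  terraform init &&
    terraform plan -out=tfplan &&
    terraform apply tfplan
}

# Usage (requires terraform and provider credentials):
#   run_terraform_migration
```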

Configuration Reference for W&B Server

This section describes the configuration options for the W&B Server application. The application receives its configuration as a custom resource definition named WeightsAndBiases. Some configuration options are exposed in the configuration below; others must be set as environment variables.

The documentation has two lists of environment variables: basic and advanced. Only use environment variables if the configuration option that you need is not exposed using the Helm Chart.

Basic example

This example defines the minimum set of values required for W&B. For a more realistic production example, see Complete example.

This YAML file defines the desired state of your W&B deployment, including the version, environment variables, external resources like databases, and other necessary settings.

apiVersion: apps.wandb.com/v1
kind: WeightsAndBiases
metadata:
  labels:
    app.kubernetes.io/name: weightsandbiases
    app.kubernetes.io/instance: wandb
  name: wandb
  namespace: default
spec:
  values:
    global:
      host: https://<HOST_URI>
      license: eyJhbGnUzaH...j9ZieKQ2x5GGfw
      bucket:
        <details depend on the provider>
      mysql:
        <redacted>
    ingress:
      annotations:
        <redacted>
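Before applying a manifest like this one, a server-side dry run can catch YAML syntax errors and API-level validation failures without changing the cluster. This is a sketch under assumptions: the function name preview_wandb_manifest is hypothetical, the default file name operator.yaml is taken from the migration steps, and the check only works after the operator (and therefore the WeightsAndBiases resource type) is installed in the cluster.

```shell
# Hypothetical helper: ask the API server to validate the manifest without persisting it.
# $1 is the manifest path (defaults to operator.yaml).
preview_wandb_manifest() {
  kubectl apply --dry-run=server -f "${1:-operator.yaml}"
}

# Usage (requires kubectl and access to the cluster):
#   preview_wandb_manifest operator.yaml
```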

Complete example

This example configuration deploys W&B to Google Cloud Anthos using Google Cloud Storage:

apiVersion: apps.wandb.com/v1
kind: WeightsAndBiases
metadata:
  labels:
    app.kubernetes.io/name: weightsandbiases
    app.kubernetes.io/instance: wandb
  name: wandb
  namespace: default
spec:
  values:
    global:
      host: https://abc-wandb.sandbox-gcp.wandb.ml
      bucket:
        name: abc-wandb-moving-pipefish
        provider: gcs
      mysql:
        database: wandb_local
        host: 10.218.0.2
        name: wandb_local
        password: 8wtX6cJHizAZvYScjDzZcUarK4zZGjpV
        port: 3306
        user: wandb
      redis:
        host: redis.example.com
        port: 6379
        password: password
      api:
        enabled: true
      glue:
        enabled: true
      executor:
        enabled: true
      license: eyJhbGnUzaHgyQjQyQWhEU3...ZieKQ2x5GGfw
    ingress:
      annotations:
        ingress.gcp.kubernetes.io/pre-shared-cert: abc-wandb-cert-creative-puma
        kubernetes.io/ingress.class: gce
        kubernetes.io/ingress.global-static-ip-name: abc-wandb-operator-address

Host

# Provide the FQDN with protocol
global:
  # example host name, replace with your own
  host: https://wandb.example.com

Object storage (bucket)

AWS

global:
  bucket:
    provider: "s3"
    name: ""
    kmsKey: ""
    region: ""

Google Cloud

global:
  bucket:
    provider: "gcs"
    name: ""

Azure

global:
  bucket:
    provider: "az"
    name: ""
    secretKey: ""

Other S3-compatible providers

global:
  bucket:
    # Example values, replace with your own
    provider: s3
    name: storage.example.com
    kmsKey: null
    path: wandb
    region: default
    accessKey: 5WOA500...P5DK7I
    secretKey: HDKYe4Q...JAp1YyjysnX

kmsKey must be null.

To reference accessKey and secretKey from a secret:

global:
  bucket:
    # Example values, replace with your own
    provider: s3
    name: storage.example.com
    kmsKey: null
    path: wandb
    region: default
    secret:
      secretName: bucket-secret
      accessKeyName: ACCESS_KEY
      secretKeyName: SECRET_KEY
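The secret referenced above has to exist in the cluster. A minimal sketch of creating it with kubectl follows; the function name create_bucket_secret is hypothetical, and it assumes the secret lives in the same namespace as the W&B deployment. The secret name and key names match the values in the example (bucket-secret, ACCESS_KEY, SECRET_KEY).

```shell
# Hypothetical helper: create the Secret that the bucket configuration references.
# $1 = namespace, $2 = access key, $3 = secret key.
create_bucket_secret() {
  namespace="$1"
  access_key="$2"
  secret_key="$3"
  kubectl create secret generic bucket-secret \
    --namespace "$namespace" \
    --from-literal=ACCESS_KEY="$access_key" \
    --from-literal=SECRET_KEY="$secret_key"
}

# Usage (requires kubectl and access to the cluster):
#   create_bucket_secret default "<access-key>" "<secret-key>"
```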

MySQL

global:
  mysql:
    # Example values, replace with your own
    host: db.example.com
    port: 3306
    database: wandb_local
    user: wandb
    password: 8wtX6cJH...ZcUarK4zZGjpV

To reference the password from a secret:

global:
  mysql:
    # Example values, replace with your own
    host: db.example.com
    port: 3306
    database: wandb_local
    user: wandb
    passwordSecret:
      name: database-secret
      passwordKey: MYSQL_WANDB_PASSWORD
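When a password comes from a secret, a common source of connection errors is a key name that does not match the configuration. The sketch below reads one key from a secret and decodes it so you can confirm that the key exists and holds the expected value. The function name show_secret_key is hypothetical, and the jsonpath form used here assumes the key name contains no dots.

```shell
# Hypothetical helper: print the decoded value of one key in a Secret.
# $1 = secret name, $2 = key name (must not contain dots for this jsonpath form).
show_secret_key() {
  kubectl get secret "$1" -o "jsonpath={.data.$2}" | base64 --decode
}

# Usage (requires kubectl and access to the cluster; prints the secret in clear text):
#   show_secret_key database-secret MYSQL_WANDB_PASSWORD
```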

License

global:
  # Example license, replace with your own
  license: eyJhbGnUzaHgyQjQy...VFnPS_KETXg1hi

To reference the license from a secret:

global:
  licenseSecret:
    name: license-secret
    key: CUSTOMER_WANDB_LICENSE
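Because a license string is long, it can be easier to create the secret from a file than from a literal. This is a sketch under assumptions: the function name create_license_secret is hypothetical, the default namespace is taken from the example manifests, and the license is assumed to be stored in a local file. The secret name and key match the configuration above.

```shell
# Hypothetical helper: create the license Secret from a local file.
# $1 = path to a file containing only the license string, $2 = namespace (default: default).
# Note: --from-file stores the file content verbatim, including any trailing newline.
create_license_secret() {
  license_file="$1"
  namespace="${2:-default}"
  [ -r "$license_file" ] || { echo "error: cannot read $license_file" >&2; return 1; }
  kubectl create secret generic license-secret \
    --namespace "$namespace" \
    --from-file=CUSTOMER_WANDB_LICENSE="$license_file"
}

# Usage (requires kubectl and access to the cluster):
#   create_license_secret ./license.txt default
```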

Ingress

To identify the ingress class, see this FAQ entry.

Without TLS

global:
# IMPORTANT: Ingress is on the same level in the YAML as 'global' (not a child)
ingress:
  class: ""

With TLS

Create a secret that contains the certificate:

kubectl create secret tls wandb-ingress-tls --key wandb-ingress-tls.key --cert wandb-ingress-tls.crt

Reference the secret in the ingress configuration:

global:
# IMPORTANT: Ingress is on the same level in the YAML as 'global' (not a child)
ingress:
  class: ""
  annotations:
    {}
    # kubernetes.io/ingress.class: nginx
    # kubernetes.io/tls-acme: "true"
  tls:
    - secretName: wandb-ingress-tls
      hosts:
        - <HOST_URI>

With the NGINX ingress controller, you might also need the following annotation:

ingress:
  annotations:
    nginx.ingress.kubernetes.io/proxy-body-size: 0

Custom Kubernetes ServiceAccounts

Specify custom Kubernetes service accounts to run the W&B pods.

The following snippet creates a service account as part of the deployment with the specified name:

app:
  serviceAccount:
    name: custom-service-account
    create: true

parquet:
  serviceAccount:
    name: custom-service-account
    create: true

global:
  ...

If the service account already exists in the cluster, set create: false:

app:
  serviceAccount:
    name: custom-service-account
    create: false

parquet:
  serviceAccount:
    name: custom-service-account
    create: false

global:
  ...

You can specify service accounts for other components, such as console:

app:
  serviceAccount:
    name: custom-service-account
    create: true

console:
  serviceAccount:
    name: custom-service-account
    create: true

global:
  ...

To use different service accounts for different components:

app:
  serviceAccount:
    name: custom-service-account
    create: false

console:
  serviceAccount:
    name: another-custom-service-account
    create: true

global:
  ...

External Redis

redis:
  install: false

global:
  redis:
    host: ""
    port: 6379
    password: ""
    parameters: {}
    caCert: ""

To reference the password from a secret, first create the secret:

kubectl create secret generic redis-secret --from-literal=redis-password=supersecret

Then reference it in the configuration:

redis:
  install: false

global:
  redis:
    host: redis.example
    port: 9001
    auth:
      enabled: true
      secret: redis-secret
      key: redis-password

LDAP

LDAP configuration support in the current Helm chart is limited. Contact W&B Support or your AISE for assistance configuring LDAP.

Configure LDAP by setting environment variables in global.extraEnv:

global:
  extraEnv:
    LDAP_ADDRESS: ldaps://ldap.company.example.com
    LDAP_BASE_DN: cn=accounts,dc=company,dc=example,dc=com
    LDAP_USER_BASE_DN: cn=users,cn=accounts,dc=company,dc=example,dc=com
    LDAP_GROUP_BASE_DN: cn=groups,cn=accounts,dc=company,dc=example,dc=com
    LDAP_BIND_DN: uid=ldapbind,cn=sysaccounts,cn=etc,dc=company,dc=example,dc=com
    LDAP_BIND_PW: ********************
    LDAP_ATTRIBUTES: email=mail,name=cn
    LDAP_TLS_ENABLE: "true"
    LDAP_LOGIN: "true"
    LDAP_USER_OBJECT_CLASS: user
    LDAP_GROUP_OBJECT_CLASS: group

OIDC SSO

global:
  auth:
    sessionLengthHours: 720
    oidc:
      clientId: ""
      secret: ""
      # Only include if your IdP requires it.
      authMethod: ""
      issuer: ""

authMethod is optional.

SMTP

global:
  email:
    smtp:
      host: ""
      port: 587
      user: ""
      password: ""

Environment variables

global:
  extraEnv:
    GLOBAL_ENV: "example"

Custom certificate authority

customCACerts is a list and can take many certificates. Certificate authorities specified in customCACerts only apply to the W&B Server application.

global:
  customCACerts:
  - |
    -----BEGIN CERTIFICATE-----
    MIIBnDCCAUKgAwIBAg.....................fucMwCgYIKoZIzj0EAwIwLDEQ
    MA4GA1UEChMHSG9tZU.....................tZUxhYiBSb290IENBMB4XDTI0
    MDQwMTA4MjgzMFoXDT.....................oNWYggsMo8O+0mWLYMAoGCCqG
    SM49BAMCA0gAMEUCIQ.....................hwuJgyQRaqMI149div72V2QIg
    P5GD+5I+02yEp58Cwxd5Bj2CvyQwTjTO4hiVl1Xd0M0=
    -----END CERTIFICATE-----
  - |
    -----BEGIN CERTIFICATE-----
    MIIBxTCCAWugAwIB.......................qaJcwCgYIKoZIzj0EAwIwLDEQ
    MA4GA1UEChMHSG9t.......................tZUxhYiBSb290IENBMB4XDTI0
    MDQwMTA4MjgzMVoX.......................UK+moK4nZYvpNpqfvz/7m5wKU
    SAAwRQIhAIzXZMW4.......................E8UFqsCcILdXjAiA7iTluM0IU
    aIgJYVqKxXt25blH/VyBRzvNhViesfkNUQ==
    -----END CERTIFICATE-----

Alternatively, reference a ConfigMap that contains the certificates:

global:
  caCertsConfigMap: custom-ca-certs

apiVersion: v1
kind: ConfigMap
metadata:
  name: custom-ca-certs
data:
  ca-cert1.crt: |
    -----BEGIN CERTIFICATE-----
    ...
    -----END CERTIFICATE-----
  ca-cert2.crt: |
    -----BEGIN CERTIFICATE-----
    ...
    -----END CERTIFICATE-----

If using a ConfigMap, each key in the ConfigMap must end with .crt (for example, my-cert.crt or ca-cert1.crt). This naming convention is required for update-ca-certificates to parse and add each certificate to the system CA store.
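Instead of writing the ConfigMap manifest by hand, it can be created directly from certificate files. The sketch below enforces the .crt naming rule before calling kubectl; the function name create_ca_configmap is hypothetical. With --from-file and a plain path, kubectl uses the file's base name as the ConfigMap key, so a file named ca-cert1.crt becomes the key ca-cert1.crt.

```shell
# Hypothetical helper: create a ConfigMap from certificate files,
# refusing any file whose name does not end with .crt.
# $1 = ConfigMap name, remaining arguments = certificate file paths.
create_ca_configmap() {
  cm_name="$1"
  shift
  for cert in "$@"; do
    case "$cert" in
      *.crt) ;;
      *) echo "error: $cert does not end with .crt" >&2; return 1 ;;
    esac
  done
  # Rewrite each path argument into a --from-file flag: the loop list is fixed
  # when the loop starts, so each pass appends one flag and drops one original path.
  for cert in "$@"; do
    set -- "$@" "--from-file=$cert"
    shift
  done
  kubectl create configmap "$cm_name" "$@"
}

# Usage (requires kubectl and access to the cluster):
#   create_ca_configmap custom-ca-certs ca-cert1.crt ca-cert2.crt
```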

Custom security context

Each W&B component supports custom security context configurations of the following form:

pod:
  securityContext:
    runAsNonRoot: true
    runAsUser: 1001
    runAsGroup: 0
    fsGroup: 1001
    fsGroupChangePolicy: Always
    seccompProfile:
      type: RuntimeDefault
container:
  securityContext:
    capabilities:
      drop:
        - ALL
    readOnlyRootFilesystem: false
    allowPrivilegeEscalation: false

The only valid value for runAsGroup: is 0. Any other value is an error.

For example, to configure the application pod, add a section app to your configuration:

global:
  ...
app:
  pod:
    securityContext:
      runAsNonRoot: true
      runAsUser: 1001
      runAsGroup: 0
      fsGroup: 1001
      fsGroupChangePolicy: Always
      seccompProfile:
        type: RuntimeDefault
  container:
    securityContext:
      capabilities:
        drop:
          - ALL
      readOnlyRootFilesystem: false
      allowPrivilegeEscalation: false

The same concept applies to console, weave, weave-trace and parquet.

Configuration reference for W&B Operator

This section describes configuration options for the W&B Kubernetes operator (wandb-controller-manager). The operator receives its configuration in the form of a YAML file.

By default, the W&B Kubernetes operator does not need a configuration file. Create one only if required, for example to specify custom certificate authorities or to deploy in an air-gapped environment.

Find the full list of spec customizations in the Helm repository.

Custom CA

customCACerts is a list and can take many certificates. Certificate authorities specified here apply only to the W&B Kubernetes operator (wandb-controller-manager).

customCACerts:
- |
  -----BEGIN CERTIFICATE-----
  MIIBnDCCAUKgAwIBAg.....................fucMwCgYIKoZIzj0EAwIwLDEQ
  MA4GA1UEChMHSG9tZU.....................tZUxhYiBSb290IENBMB4XDTI0
  MDQwMTA4MjgzMFoXDT.....................oNWYggsMo8O+0mWLYMAoGCCqG
  SM49BAMCA0gAMEUCIQ.....................hwuJgyQRaqMI149div72V2QIg
  P5GD+5I+02yEp58Cwxd5Bj2CvyQwTjTO4hiVl1Xd0M0=
  -----END CERTIFICATE-----
- |
  -----BEGIN CERTIFICATE-----
  MIIBxTCCAWugAwIB.......................qaJcwCgYIKoZIzj0EAwIwLDEQ
  MA4GA1UEChMHSG9t.......................tZUxhYiBSb290IENBMB4XDTI0
  MDQwMTA4MjgzMVoX.......................UK+moK4nZYvpNpqfvz/7m5wKU
  SAAwRQIhAIzXZMW4.......................E8UFqsCcILdXjAiA7iTluM0IU
  aIgJYVqKxXt25blH/VyBRzvNhViesfkNUQ==
  -----END CERTIFICATE-----

Alternatively, reference a ConfigMap that contains the certificates:

caCertsConfigMap: custom-ca-certs

apiVersion: v1
kind: ConfigMap
metadata:
  name: custom-ca-certs
data:
  ca-cert1.crt: |
    -----BEGIN CERTIFICATE-----
    ...
    -----END CERTIFICATE-----
  ca-cert2.crt: |
    -----BEGIN CERTIFICATE-----
    ...
    -----END CERTIFICATE-----

Each key in the ConfigMap must end with .crt (for example, my-cert.crt or ca-cert1.crt). This naming convention is required for update-ca-certificates to parse and add each certificate to the system CA store.

FAQ

What is the purpose/role of each individual pod?

- wandb-app: the core of W&B, including the GraphQL API and frontend application. It powers most of our platform’s functionality.

- wandb-console: the administration console, accessed via /console.

- wandb-otel: the OpenTelemetry agent, which collects metrics and logs from resources at the Kubernetes layer for display in the administration console.

- wandb-prometheus: the Prometheus server, which captures metrics from various components for display in the administration console.

- wandb-parquet: a backend microservice separate from the wandb-app pod that exports database data to object storage in Parquet format.

- wandb-weave: another backend microservice that loads query tables in the UI and supports various core app features.

- wandb-weave-trace: a framework for tracking, experimenting with, evaluating, deploying, and improving LLM-based applications. The framework is accessed via the wandb-app pod.

How to get the W&B Operator Console password

See Accessing the W&B Kubernetes Operator Management Console.

How to access the W&B Operator Console if Ingress doesn’t work

Execute the following command on a host that can reach the Kubernetes cluster:

kubectl port-forward svc/wandb-console 8082

Then access the console in a browser at https://localhost:8082/.

See Accessing the W&B Kubernetes Operator Management Console for how to get the password (Option 2).

How to view W&B Server logs

The application pod is named wandb-app-xxx.

kubectl get pods

kubectl logs wandb-XXXXX-XXXXX
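The two commands above can be combined so that the pod name does not have to be copied by hand. This is a minimal sketch: the function name wandb_app_logs is hypothetical, and it assumes the application pod name starts with wandb-app- in the current namespace, as described above. If several pods match, it uses the first one listed.

```shell
# Hypothetical helper: find the first pod whose name starts with "wandb-app-"
# and print the last N lines of its logs (default 100).
wandb_app_logs() {
  pod=$(kubectl get pods -o name | grep '^pod/wandb-app-' | head -n 1)
  [ -n "$pod" ] || { echo "error: no wandb-app pod found" >&2; return 1; }
  kubectl logs "$pod" --tail="${1:-100}"
}

# Usage (requires kubectl and access to the cluster):
#   wandb_app_logs 200
```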

How to identify the Kubernetes ingress class

You can get the ingress class installed in your cluster by running